How to See Through Walls With Off-the-Shelf VPS and Meta Ray-Bans

The same defense capability Anduril sells to the DoD, recreated on consumer gear -- and how you can try it yourself.

Last year, this took a defense contract. This week I did it with an iPhone.

I tracked a person through a concrete wall -- every step, every turn. Centimeter-level accuracy, using just an iPhone and a $300 pair of Meta Ray-Bans tied into off-the-shelf VPS software.

Try it yourself → https://github.com/bilawalsidhu/see-through-walls

What a Defense Contract Buys

A few months ago, Anduril dropped EagleEye -- an XR headset built for soldiers. It overlays a live mini-map in your field of view, auto-tags friendlies and threats using AI, and yes, lets you see people through walls by fusing sensor data across the squad. It’s impressive. It’s also tied to a classified sensor suite and a DoD procurement process.

I wanted to know how close I could get with off-the-shelf hardware. Turns out -- uncomfortably close.

How $300 Glasses Pull This Off

Here’s the problem: Meta’s Ray-Bans are essentially dumb. One camera on your face. No depth sensor, no LiDAR, no SLAM. Every other “smart” headset has a dozen sensors figuring out where you are in space. The Ray-Bans have nothing.

The answer is something I spent years working on at Google: VPS -- Visual Positioning System. I made a video on this previously: VPS Explained

You pre-scan an environment. Build a 3D model. Then, when someone walks through that space, the camera feed gets matched against the model in real time. Every frame: based on what you’re seeing right now, where must you be standing? Down to the centimeter. Six degrees of freedom on glasses that can’t even find themselves.
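To make the “where must you be standing?” question concrete, here’s a toy sketch in Python. It is not how MultiSet or ARCore work internally: production VPS matches image features against the map and solves for pose with far more efficient methods. This version brute-forces a coarse grid of candidate poses and keeps the one whose predicted pixel positions best match what the camera reports. Every name and number in it (`project`, `localize`, the landmark list, the focal length, the fixed eye height, yaw-only rotation) is a made-up assumption for illustration.

```python
import math

# Toy localization: which candidate pose best explains what the camera sees?
# All names and numbers are hypothetical, not any vendor's API.

def project(point, cam_xy, yaw, eye_height=1.6, focal=500.0, cx=320.0, cy=240.0):
    """Project a 3D map point into a simple pinhole camera.

    Map frame: x east, y north, z up. At yaw = 0 the camera looks along +y;
    positive yaw turns it counterclockwise when seen from above.
    Returns (u, v) in pixels, or None if the point is behind the camera.
    """
    dx = point[0] - cam_xy[0]
    dy = point[1] - cam_xy[1]
    dz = point[2] - eye_height
    right = dx * math.cos(yaw) + dy * math.sin(yaw)
    forward = -dx * math.sin(yaw) + dy * math.cos(yaw)
    if forward <= 0.1:
        return None
    return (cx + focal * right / forward, cy - focal * dz / forward)


def reprojection_error(landmarks, observed, cam_xy, yaw):
    """Sum of squared pixel distances between predicted and observed points."""
    total = 0.0
    for point, pixel in zip(landmarks, observed):
        predicted = project(point, cam_xy, yaw)
        if predicted is None:
            return math.inf  # this pose cannot see a landmark the camera saw
        total += (predicted[0] - pixel[0]) ** 2 + (predicted[1] - pixel[1]) ** 2
    return total


def localize(landmarks, observed):
    """Brute-force search over a coarse grid of (x, y, yaw) candidates."""
    best = None
    for ix in range(9):              # x from 0.0 to 4.0 m in 0.5 m steps
        for iy in range(9):          # y from 0.0 to 4.0 m in 0.5 m steps
            for k in range(-10, 11): # yaw from -1.0 to 1.0 rad in 0.1 rad steps
                cam_xy, yaw = (ix * 0.5, iy * 0.5), k * 0.1
                err = reprojection_error(landmarks, observed, cam_xy, yaw)
                if best is None or err < best[0]:
                    best = (err, cam_xy, yaw)
    return best[1], best[2]


# The "pre-scanned map": five landmarks with known 3D positions.
LANDMARKS = [(0.0, 6.0, 1.0), (2.0, 7.0, 2.5), (4.0, 6.5, 0.5),
             (1.0, 8.0, 3.0), (3.0, 9.0, 1.5)]

# Simulate one camera frame taken from a pose the search does not know.
true_xy, true_yaw = (1.0, 2.0), 0.3
observed = [project(p, true_xy, true_yaw) for p in LANDMARKS]

position, heading = localize(LANDMARKS, observed)
print(position, round(heading, 2))
```

The search recovers the pose only because the true pose happens to sit on the grid and the simulated pixels are noise-free. A real system has to cope with noisy matches, outliers, and full 3D rotation.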

Google built a version using Street View imagery across 100+ countries -- the ARCore Geospatial API I helped ship. But that only works where Street View has been. Your office, your home, a factory floor -- that’s where MultiSet comes in.


MultiSet is the only platform publicly supporting the Meta Ray-Ban SDK, and it has already earned “Most Robust” in AREA’s 2025 Enterprise VPS Report, ahead of Niantic. The company is barely a year old. Scan the space, upload it, and the glasses just work. Centimeter accuracy. Day or night. Live.

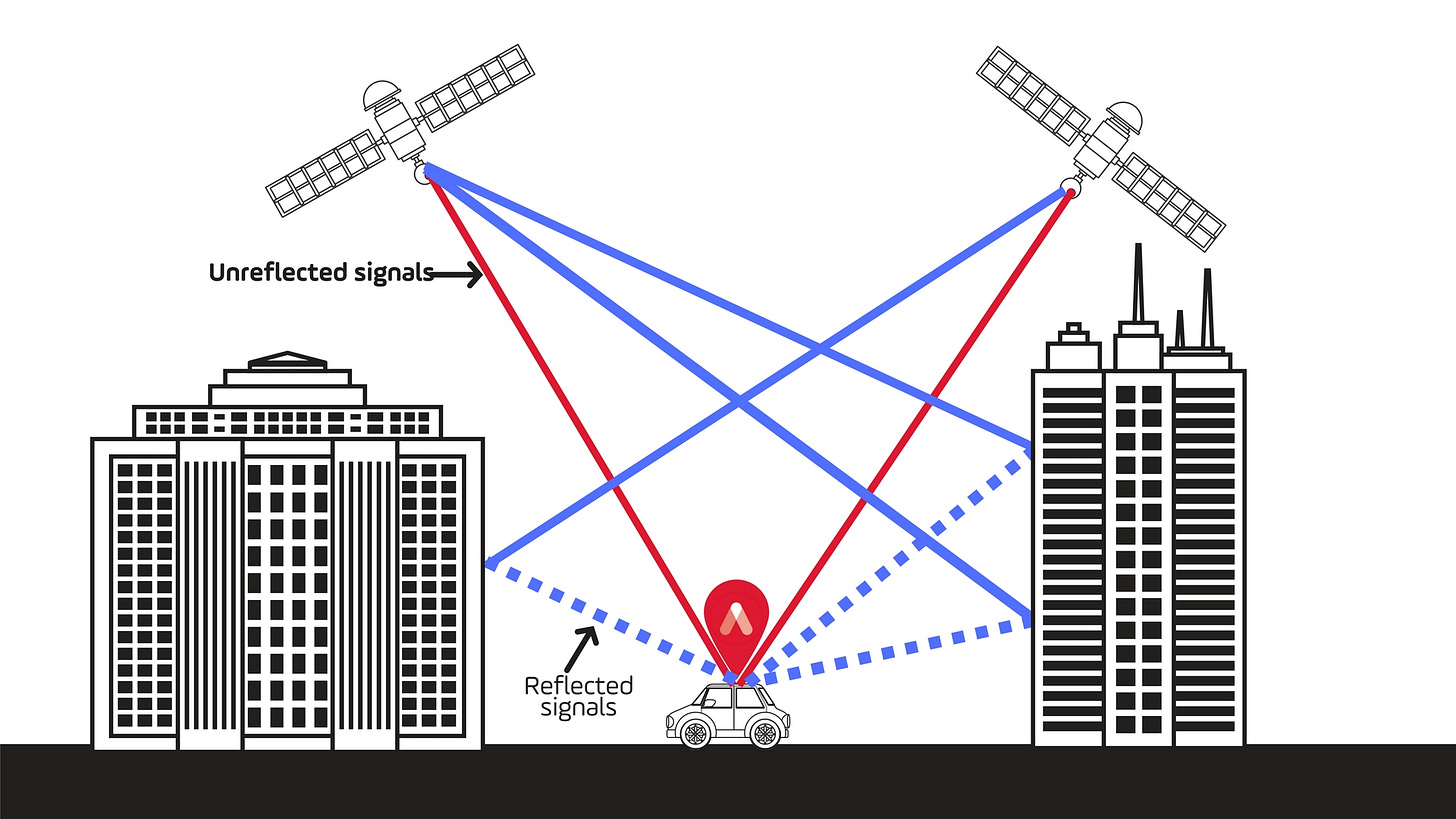

The GPS Problem

I know what you’re thinking. Why not just use GPS?

GPS gives you a couple of meters of positional accuracy and a heading that’s good to maybe 20 degrees on a good day -- outdoors. Take it inside, and it falls apart. Your blue dot jumping across a mall knows this. Your Uber driver circling a parking garage knows this.

VPS gives you centimeter accuracy, indoors, without beacons, without infrastructure. Scan once, and then any camera in that space knows exactly where it is. Which floor, which room, which direction it’s facing.
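A quick back-of-the-envelope sketch shows why that 20-degree heading figure matters so much for overlays. It assumes position is perfect and only heading is off, so a label anchored some distance ahead misses sideways by roughly distance × tan(error). The function name `label_drift_m` and the sample distances are illustrative, not from any SDK.

```python
import math

def label_drift_m(distance_m, heading_error_deg):
    """Sideways miss (meters) of a world-anchored label from heading error alone."""
    return distance_m * math.tan(math.radians(heading_error_deg))

# How far off does a label land with a 20-degree heading error?
for distance in (2, 5, 10):
    print(f"{distance} m away: label lands {label_drift_m(distance, 20):.2f} m off")
```

At five meters, a 20-degree error puts the label nearly two meters from where it belongs, which is the difference between tagging the right doorway and the wrong one.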

Where This Gets Serious

The see-through-walls trick is cool, but it’s just the first application of a more fundamental capability: shared spatial coordinates.

Once every device in a space is anchored to the same 3D map, things start clicking. A technician on an oil rig walks up to equipment and the last repair notes, maintenance checklist, and a remote expert’s annotations pop up in world space -- anchored to that exact spot. Someone in Germany can see the same view and draw on it. Months later, when the next crew rotates in, all that data is still there, floating where it was left.
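Here’s a minimal sketch of why shared coordinates are enough to draw someone on the far side of a wall. It assumes both devices already report a pose in the same map frame (that’s the VPS part) and uses a simplified pinhole camera with yaw-only rotation. The `Pose` class, `overlay_for` function, and camera parameters are hypothetical names for illustration, not part of any real SDK.

```python
import math
from dataclasses import dataclass

@dataclass
class Pose:
    """Position (meters) and heading (radians) in the shared map frame."""
    x: float
    y: float
    z: float
    yaw: float  # 0 looks along +y; positive turns counterclockwise from above

def overlay_for(viewer, target, focal=500.0, cx=320.0, cy=240.0):
    """Where should the viewer's display draw the target?

    Returns (distance_m, (u, v)); the pixel is None when the target is behind
    the viewer. Walls never enter the math: both poses come from the map,
    not from line of sight.
    """
    dx, dy, dz = target.x - viewer.x, target.y - viewer.y, target.z - viewer.z
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    # Express the offset in the viewer's camera axes (right, forward).
    right = dx * math.cos(viewer.yaw) + dy * math.sin(viewer.yaw)
    forward = -dx * math.sin(viewer.yaw) + dy * math.cos(viewer.yaw)
    if forward <= 0.1:
        return distance, None
    return distance, (cx + focal * right / forward, cy - focal * dz / forward)

# Viewer looks north from the origin; the teammate is 5 m ahead, 1 m to the right.
viewer = Pose(0.0, 0.0, 1.6, 0.0)
teammate = Pose(1.0, 5.0, 1.6, math.pi)
distance, pixel = overlay_for(viewer, teammate)
print(f"{distance:.2f} m away, draw at {pixel}")
```

The same transform works for a repair note, a drone, or a robot: anything with a pose in the shared map can be placed in anyone else’s view.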

Drones need this to fly in GPS-denied environments -- war zones where GPS is actively jammed. Self-driving cars run a version of this under the hood. And once you’ve scanned a space, everything operating in it -- glasses, phones, cameras, robots -- shares the same spatial understanding.

Now scale that. Every sensor in a city, anchored to a persistent 3D map. Privately in your home. Publicly on the highway. Access-controlled on a factory floor. The spatial map becomes the operating system for the physical world.

The Dual-Use Reality

This is where every dual-use technology ends up.

The capability that gives a maintenance crew situational awareness is the same one a building owner can use to track every footstep. Grocery stores are probably already doing it -- spatial heat maps, dwell-time analytics, attention tracking. Everything websites do with mouse tracking, but in physical 3D space.

MultiSet offers on-premise deployment, so you own all the localization data. Nobody else sees the tracks. That’s the right default. But the technology is capability-neutral. What gets built on top of it is the part that matters.

The Real Takeaway

I went into this wanting to see if consumer hardware could pull off military-grade spatial tracking. It can. A camera is all the sensing you need -- pair it with a pre-scanned map and a phone.

I’ve been in this space since 2013, and we’ve still got a ways to go on the glasses themselves. But the next five years of AR and VR won’t be won on hardware. They’ll be won on the spatial map that pulls all these systems together.

If this gave you something to think about, share it with someone who should see it.

- Bilawal
