The AI Primitives You Need To Know – Harvard Lecture

The AI-driven systems of creation that will shape tomorrow.

Spatial and visual AI tools are evolving into the foundation of a new creative stack. Photogrammetry, NeRFs, and Gaussian splats are turning reality into editable digital space. AI-driven pose estimation, segmentation, depth inference, and relighting are reshaping how performances and scenes are captured. Hybrid workflows using tools like Beeble or Move.ai integrate directly with Blender and Unreal Engine—bridging generative speed with hands-on control.

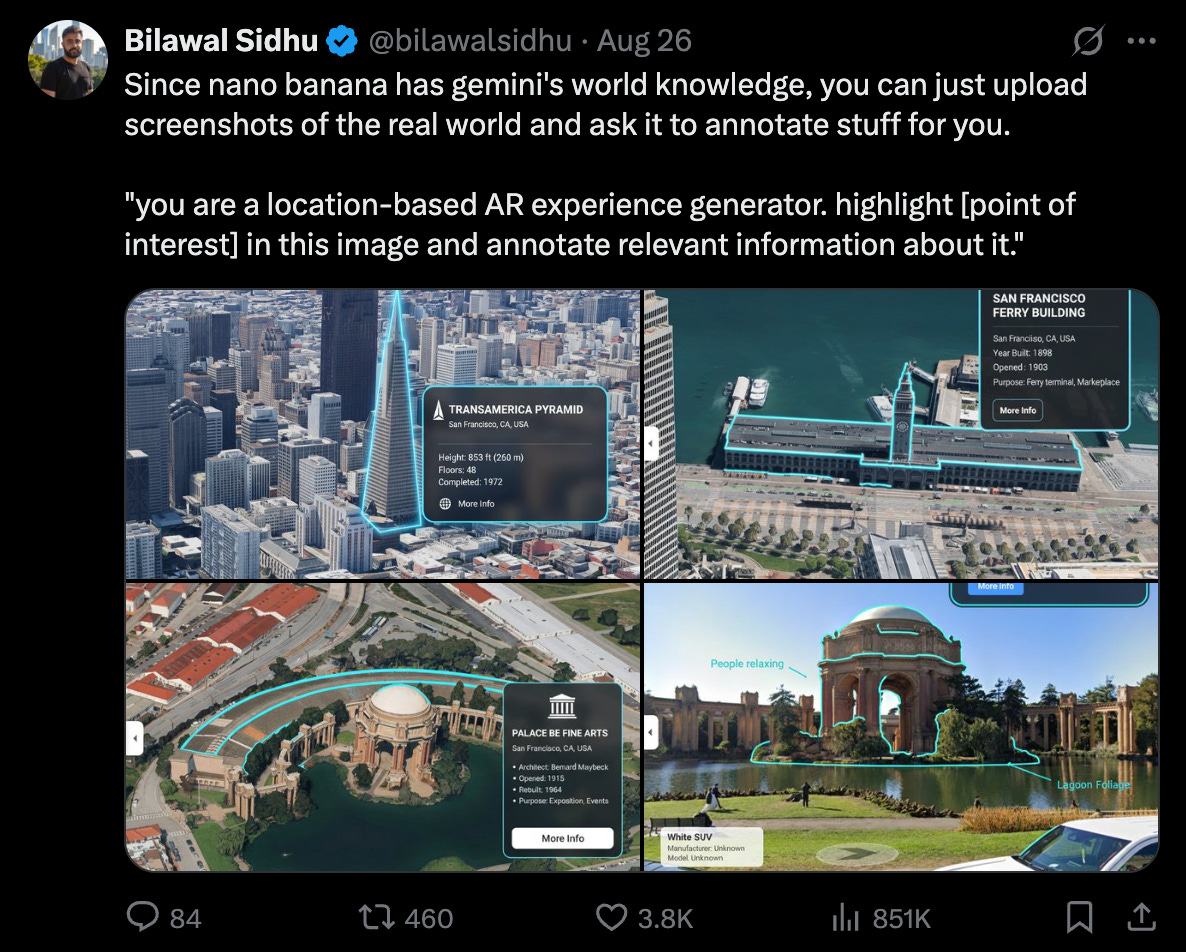

Together, these shifts mark the rise of the content-to-content paradigm, where any input can become any output: a photo into a scene, a recording into a personalized podcast, a map into an AR tour, or a sketch into a generative AI game. Media is no longer assembled piece by piece—it’s authored at higher levels of abstraction, increasingly dynamic, and delivered faster than ever.

In this new video from a lecture at Harvard XR, I break it all down: how spatial intelligence, visual intelligence, and hybrid workflows are converging into this new creative stack. We’ll explore the tools and the bigger picture of what it means for the future of media.

Watch the full lecture to see how creators, studios, and entire industries can harness these AI primitives.

YouTube Chapter Links:

00:00 – The New AI Primitives

00:24 – My Background

01:21 – Automation & Democratization

03:36 – Spatial AI

12:33 – Visual AI

15:40 – Hybrid Workflows

17:59 – The Future of Media

The New Creative Stack

Traditional content creation was a waterfall: specialized tools linked in sequence. AI primitives flip that model—turning complex pipelines into real-time, modular building blocks.

Spatial Intelligence: Photogrammetry, NeRFs, and Gaussian splats democratize reality capture, enabling anyone to digitize spaces at cinematic fidelity.

Visual Intelligence: Pose estimation, segmentation, and depth tools let creators reskin and relight performances without motion-capture suits or green screens.

Hybrid Workflows: With vibe coding and the Model Context Protocol (MCP), LLMs will orchestrate existing tools, combining explicit control with generative flexibility.

The result? Content isn’t just faster—it’s fundamentally different. It becomes “content-to-content,” where any input (text, photo, scan) can become any output (video, 3D world, virtual tour).
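One way to picture "any input can become any output" is to treat each primitive as a typed converter and let a planner chain them. The sketch below is purely illustrative: the `Primitive` class, the catalog entries, and the `plan` function are hypothetical names invented for this example, not real tools or APIs, and a real pipeline would call actual capture, rendering, or generation services at each step.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Primitive:
    """A hypothetical building block that converts one media type into another."""
    name: str
    source: str
    target: str


# Illustrative catalog only; these names are placeholders, not real tools.
PRIMITIVES = [
    Primitive("reality_capture", "photo", "3d_scene"),
    Primitive("text_to_image", "text", "photo"),
    Primitive("scene_render", "3d_scene", "video"),
    Primitive("tour_builder", "3d_scene", "virtual_tour"),
]


def plan(source, target, catalog=PRIMITIVES):
    """Breadth-first search for the shortest chain of primitives from source to target."""
    queue = deque([(source, [])])
    seen = {source}
    while queue:
        kind, chain = queue.popleft()
        if kind == target:
            return [p.name for p in chain]
        for p in catalog:
            if p.source == kind and p.target not in seen:
                seen.add(p.target)
                queue.append((p.target, chain + [p]))
    return None  # no chain of primitives connects the two media types


if __name__ == "__main__":
    print(plan("text", "virtual_tour"))
    print(plan("photo", "video"))
```

Under these assumptions, adding a new primitive to the catalog immediately opens up new input-to-output routes without rewiring the rest of the pipeline, which is the contrast with the waterfall model described above.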

On the Horizon 🔭

We’re heading into a future where content is personalized, dynamic, and disposable—built for you, in the moment, on the platform you choose. This shift will reshape industries from filmmaking to advertising to AR tourism.

Jensen Huang predicts a world where every pixel is generated, not rendered. No matter where you place this on the timeline, the direction is clear: media is evolving into systems rather than steps. The building blocks are already here—it’s up to us to decide how to use them.

If this gave you something to think about, share it with fellow reality mappers. The future's too interesting to navigate alone.

Cheers,

Bilawal Sidhu

https://bilawal.ai
