Why China Is Winning AI Video — And Are Game Engines Obsolete?

Two SOTA AI video models from China in one week, plus Google's Genie 3 sending gaming stocks into free-fall. Here's what it all means.

China did it again. Actually, they did it twice. In the span of a single week, Kuaishou dropped Kling 3.0, and then Bytedance followed with Seedance 2.0. Two AI video models that are making US models look a generation behind.

Meanwhile, Google’s Project Genie rollout tanked gaming stocks across the board. Unity went down 35%. Take Two, Roblox, and Nintendo are all bleeding. The market is betting on a future where world models like Genie 3 make game engines obsolete.

So let’s talk about all of it, cut through the hype, and find what will have real implications on the future.

Seedance 2.0: The Composition King

For Seedance 2.0, the big unlock is multimodal inputs. Not just text and images, but audio files and video references. You can throw in a collection of assets, and the model composes them together into a coherent generation. I predicted this back in December for audio references. It’s February and it’s already here.

The text rendition alone is the nano banana moment for video. Perfect text, synchronized audio, and consistent characters. If you’re a UI/UX designer who’s been suffering through the Figma-to-After Effects-to-prototyping-tool pipeline just to make a product demo, this could change your entire workflow. Throw your designs into Seedance, and you’re off to the races. We were all so focused on tools like Remotion for hard-coded motion graphics, and video models just made all of that potentially irrelevant. The bitter lesson is bitter for a reason.

Visually, where Seedance really separates itself is in action sequences. Models like Veo have struggled with explosions, muzzle flashes, and high-energy cinematics. I tried doing this stuff with Veo a few months ago and it was painful. Seedance nails it with accurate physics, aerial sequences, and slow-motion damage. The camera motion feels natural and grounded, not that floaty AI drift we’ve all grown to recognize. We’re past the uncanny valley on this one.

Kling 3.0: The 4K Powerhouse

Where Seedance is the composition king, Kling 3.0 is the resolution and consistency king. 4K outputs. 15-second multi-shot sequences with self-consistent characters and environments across camera angle changes. Best-in-class native audio generation. Phenomenal lip sync.

When you compare both Chinese models side-by-side against Sora and Veo on the same prompts, the edge is clear, not just in image quality, but in how natural the voice sounds and how grounded the cinematography feels.

Why China Keeps Winning

The answer is threefold: data, labeling quality, and workforce.

Generation quality is directly proportional to how scared companies are of getting sued. Adobe trains on licensed data only and has no state-of-the-art models. OpenAI made a strategic call with Sora 2 to train on major IP and deal with takedowns later. Google has YouTube. But China trains on absolutely everything under a completely different IP regime. And when you’re open-sourcing the models, there’s nothing anyone can do about it.

On top of that, China has film students doing dense video labeling — people who can describe scenes with actual cinematic vocabulary. That produces fundamentally better training data than more engineering-first annotation used by other labs. As Martin Casado posted, “Models are largely smart people caches. The harder the people work and the cheaper they are, the better the model.”

The only dimension where the US leads is compute. The best chips are still controlled by the US and its allies. But on data and labeling? China’s at parity or ahead.

World Models vs. Game Engines: The Real Question

Now let’s shift gears. Google’s Genie 3 sent the gaming industry into a panic, and the question everyone’s asking is whether game engines are actually cooked.

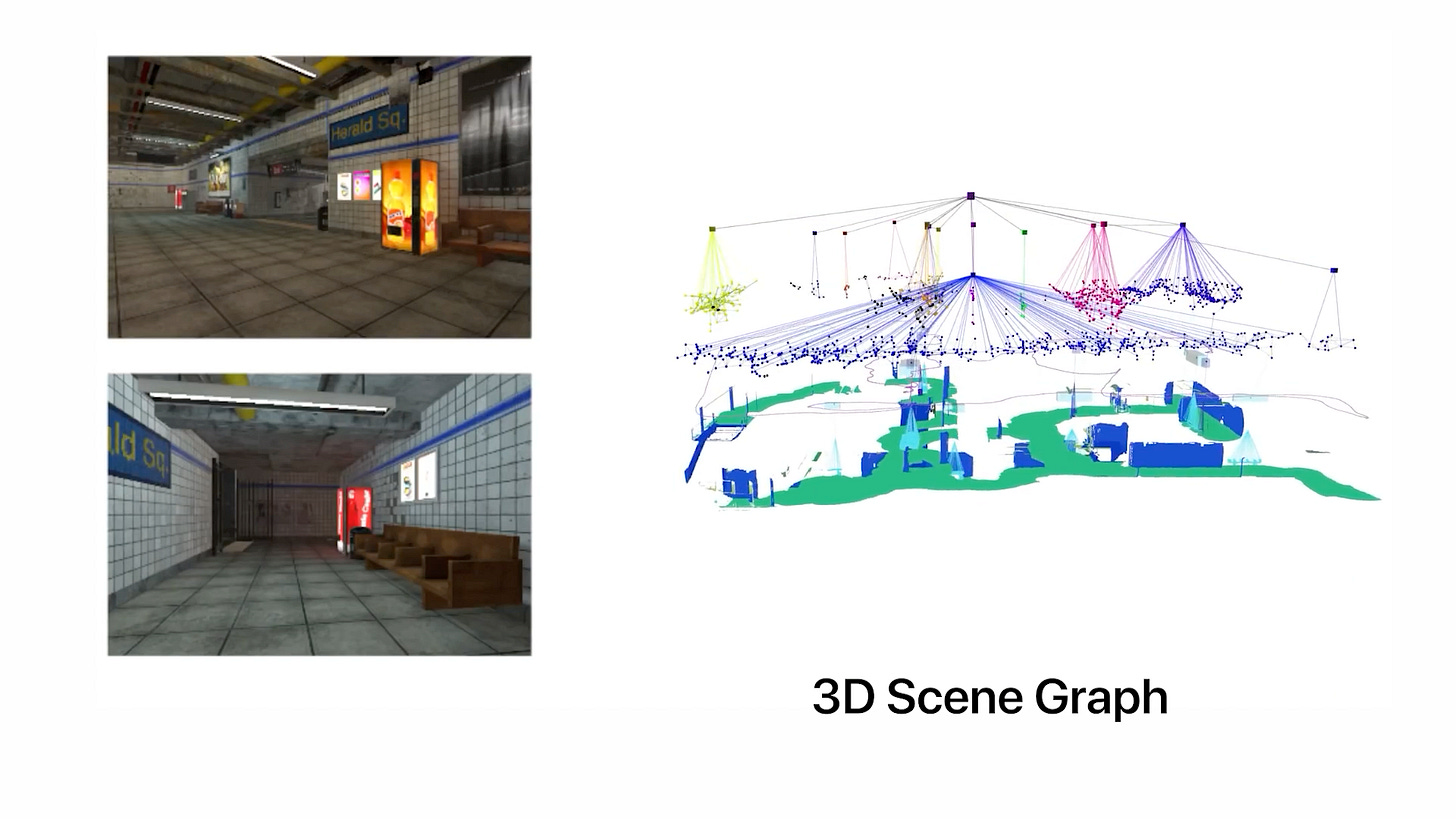

They’re not. Not yet. But the convergence is real, and I think the right framing is explicit vs. implicit approaches racing toward the same goal.

The explicit approach takes existing tools like Unity, Maya, Blender, and supercharges them with AI. Text-to-3D generation, visual language models composing scenes from concept art, AI animation tools like Cascadeur, and LLMs writing your Unreal Engine nodes. The pitch: automate the drudgery while keeping everything addressable and editable.

The implicit approach says screw it, scale the neural network. More data, more compute, and out comes something that eats the game engine for lunch. And Genie 3 is the strongest proof point yet: Google went from training on gameplay footage to a chunk of YouTube, and it just worked. Emergent physics, lighting, and spatial consistency that Genie 2 couldn’t touch.

And here’s what people keep getting wrong: just because Genie outputs pixels doesn’t mean it’s “just 2D.” The 3D structure lives in the model weights. We’ve seen this with NeRFs and Gaussian splats. The medium is new, and the possibilities — stepping inside artwork, walking around a photo, interactive feeds of remixable experiences — are things conventional game engines are actually terrible at.

I keep thinking about self-driving cars. We went from a federation of inspectable classical systems to a black box where pixels come in, and driving policy comes out. That took a decade. Why wouldn’t the same thing happen here on a compressed timeline?

Where I Net Out 🔭

On video models: China has the edge. Seedance 2.0 and Kling 3.0 are both production-ready in ways that US models aren’t. Google has the best shot at closing the gap with the next Veo, but the gap is real, and it’s widening.

On game engines: Rockstar isn’t cooked — yet. Genie is still a slot machine, stochastic and unpredictable. But that’s where things are, not where they’re going. And the companies building world models aren’t actually trying to build the next GTA. They’re building AI that understands the physical world because that’s a fundamental prerequisite for AGI. Reinventing the game engine is just a byproduct of teaching AI how reality works.

That might be the most important takeaway from this entire week.

If this gave you something to think about, share it with fellow reality mappers. The future’s too interesting to navigate alone.

Cheers,

Bilawal Sidhu

bilawal.ai

I’ve been trying to understand how models that output pixels and not intermediate representations would offer enough creative control. Editing with chat alone isn’t going to be it. You make a really useful point about that these representations are in latent space.

Seems like there’s plenty of work to do on editing UI in top of latent space?

Fascinating and sharply argued piece — especially the framing of multimodal “composition” as the real unlock rather than raw text-to-video. The comparison between explicit (engine-based) and implicit (world model) approaches is also a helpful lens for thinking about where this is headed.

For a future article, I’d love to see three issues explored more rigorously:

Benchmarking methodology. When we say Seedance or Kling are “a generation ahead,” what were the prompts, seeds, rejection rates, and post-processing steps? A transparent comparison framework would make the case much stronger.

Enterprise viability and IP risk. If looser data regimes are part of the advantage, how does that affect global deployment, licensing, and brand-safe adoption outside China?

Editability vs. spectacle. How close are these models to deterministic, frame-level control suitable for production workflows, as opposed to impressive but stochastic outputs?

Overall, this was a compelling synthesis — it would be great to see the next piece go one layer deeper into the structural constraints behind the hype.