Your WiFi Can See You. Here's How.

Why RF is the next great sensing frontier — from your living room to low Earth orbit.

Your WiFi router can already detect when somebody walks through your home.

Not with a camera. Not with a sensor you bought. Just the WiFi signal bouncing off your body.

I made a video about this last week and it kind of blew up, mostly because people either didn’t think this was possible, or had long suspected it was but didn’t think it could get this good.

But YouTube only gives you 11 minutes, and honestly, the rabbit hole goes way deeper than what I could fit in the video. So let’s get into it.

The Basics: Your Router Already Knows You’re There

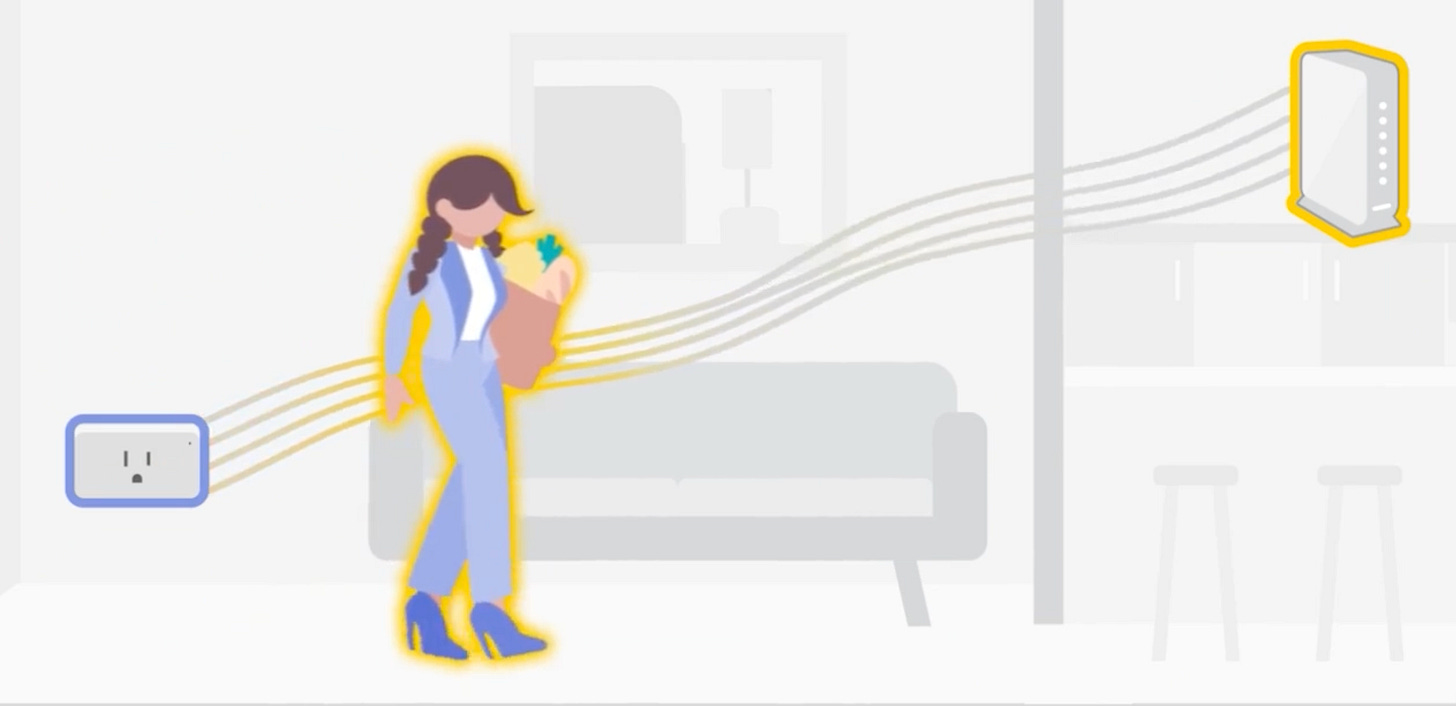

Here’s how this works. Your WiFi router is constantly sending out radio waves, typically at 2.4 GHz and 5 GHz. These waves bounce off everything -- your walls, your furniture, your body. When you move through your home, those reflections change in ways that are really specific to what moved and how it moved.

Your router already tracks something called channel state information, or CSI. It’s basically how the router optimizes the signal between itself and all your devices. But CSI also encodes the shape and movement of everything between the router and your devices. And that includes you.

Xfinity figured this out. They shipped a feature called WiFi Motion to every customer with one of the newer gateways. No extra cost. No extra hardware. Your existing network essentially becomes a motion sensor. I’ve got this working in my house -- walk into a room, lights turn on.

Companies brand it as “WiFi Motion” or “WiFi Sensing” or whatever sounds less creepy. But the underlying capability is the same. Your router is doing presence detection. It knows you’re there.
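To make the idea concrete, here is a minimal sketch of presence detection from CSI. It assumes you already have CSI frames as lists of complex per-subcarrier values, and it uses one simple heuristic: if the signal amplitude varies a lot over a short window, something moved. The function names, the threshold, and the synthetic data are all my own assumptions for illustration. This is not how Xfinity or any vendor actually implements it.

```python
import math
import random
import statistics

def motion_score(frames):
    """Average, over subcarriers, of how much the CSI amplitude varies in time."""
    amplitudes = [[abs(h) for h in frame] for frame in frames]
    per_subcarrier = zip(*amplitudes)  # transpose: one time series per subcarrier
    return statistics.fmean([statistics.pvariance(series) for series in per_subcarrier])

def detect_motion(frames, threshold=0.01):
    """True if the window of frames looks like something moved (threshold is a guess)."""
    return motion_score(frames) > threshold

def synthetic_frames(n_frames, n_subcarriers, wobble, seed=0):
    """Made-up CSI: a static channel plus small noise, plus an optional slow
    amplitude wobble standing in for a body moving through the signal path."""
    rng = random.Random(seed)
    base = [complex(math.cos(k), math.sin(k)) for k in range(n_subcarriers)]
    frames = []
    for t in range(n_frames):
        scale = 1.0 + wobble * math.sin(0.5 * t)
        frames.append([h * scale + complex(rng.gauss(0, 0.01), rng.gauss(0, 0.01))
                       for h in base])
    return frames

empty_room = synthetic_frames(100, 52, wobble=0.0)
person_walking = synthetic_frames(100, 52, wobble=0.3)

print("empty room:    ", detect_motion(empty_room))
print("person walking:", detect_motion(person_walking))
```

Real systems have to deal with things this sketch ignores, like pets, fans, and noisy hardware, but the core signal is the same: a still room gives a steady channel, a moving body does not.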

If you want the visual version, here’s the video:

Step Two: WiFi Can See Your Skeleton

Motion detection is just step one. And honestly, it’s the boring step.

What follows takes more than your off-the-shelf router. You need CSI-capable hardware that exposes per-subcarrier signal data -- which, by the way, is exactly what 802.11bf is about to change.

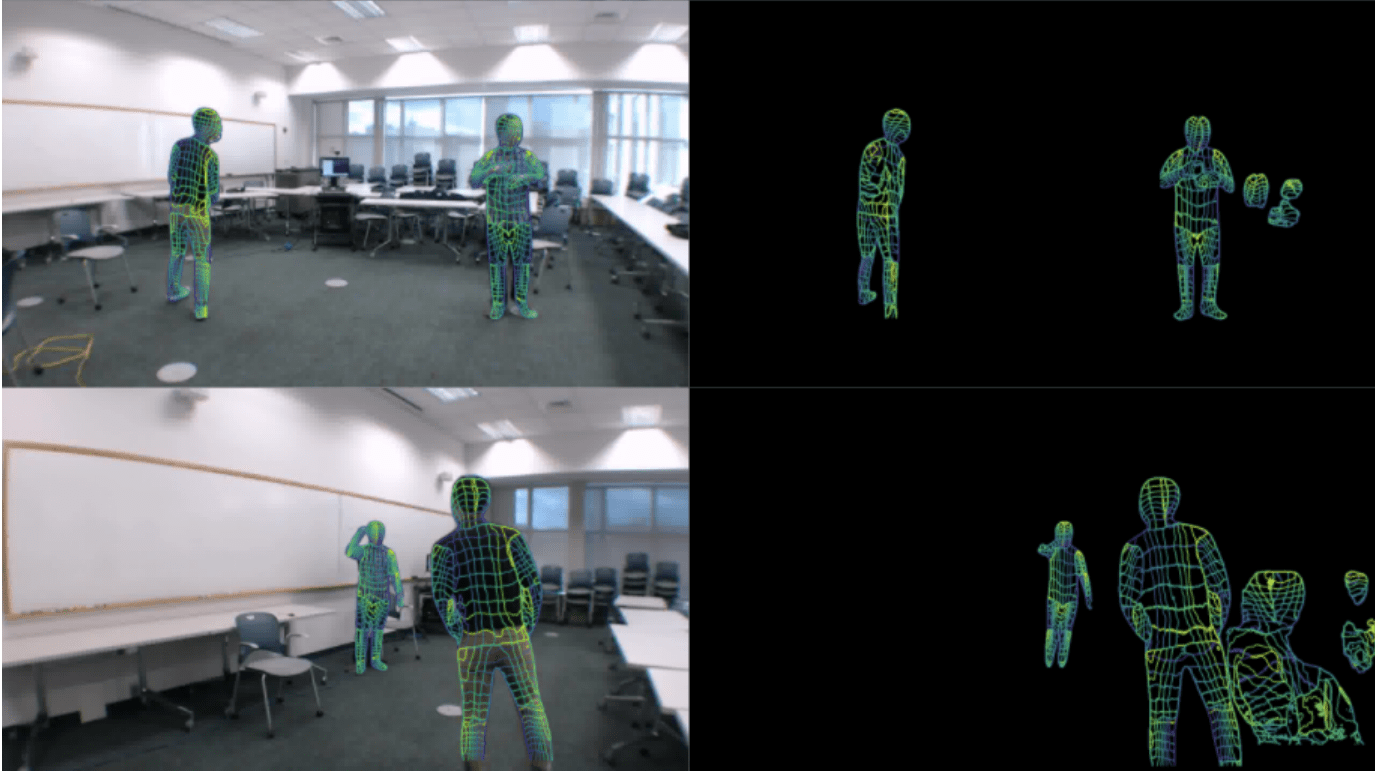

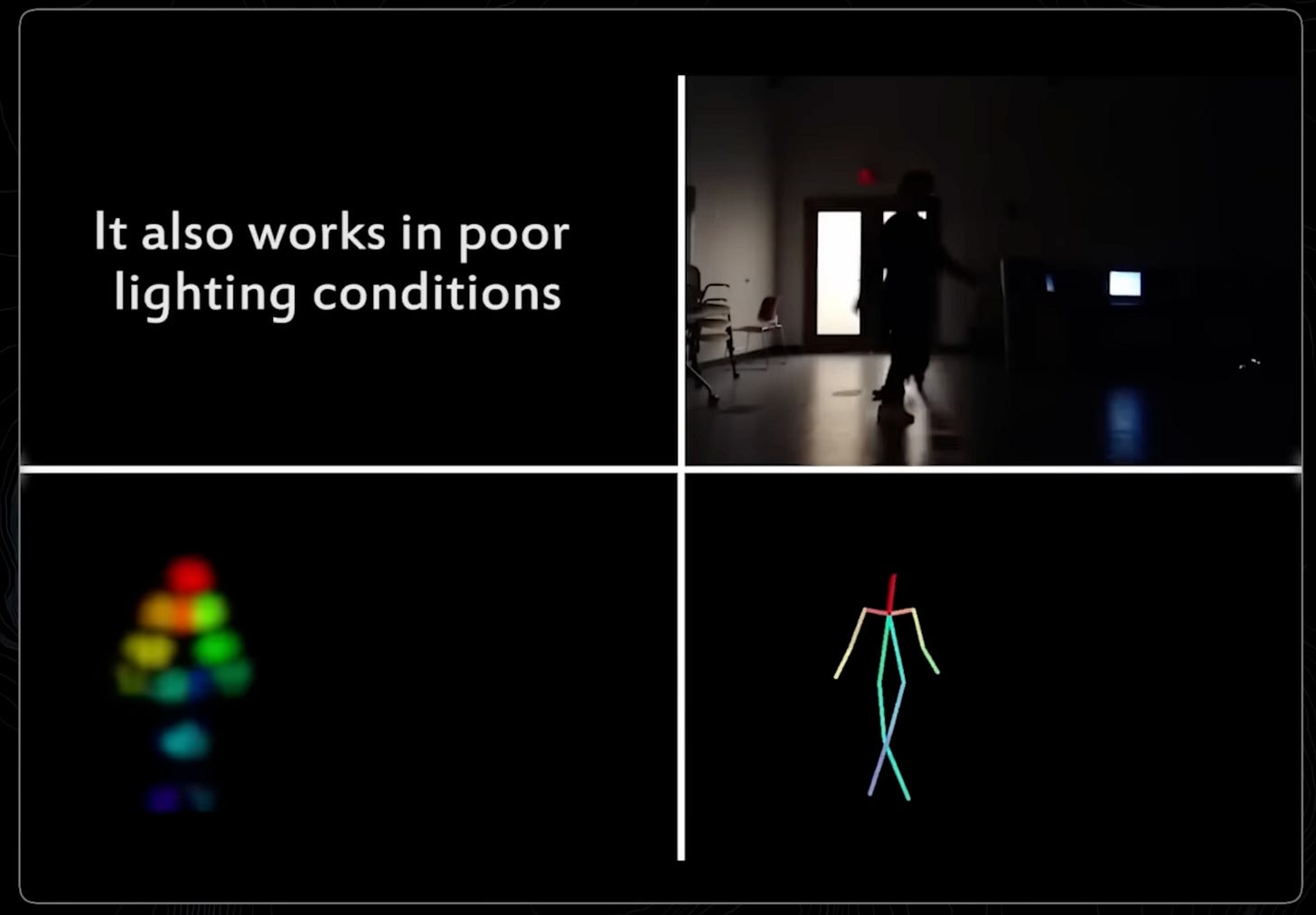

In 2023, Carnegie Mellon University published a paper called “DensePose from WiFi.” Using just WiFi signals from a standard router, an AI model reconstructed full body poses. Not just “someone is in the room.” The actual position of your arms, your legs, your posture. Multiple people. Through walls. No camera.

And it’s gotten better since then. A 2024 paper called Person-in-WiFi 3D did multi-person 3D pose estimation from WiFi. The resolution achieved is kind of nuts -- joint localization errors of about 92mm for a single person -- and that’s approaching what you get from camera-based systems. With three people in the room, it degrades to about 125mm.

That’s still good enough to know that one person is sitting on the couch while another is standing at the kitchen counter. A January 2025 paper called DT-Pose tackled the cross-domain problem: getting a model trained in one room to keep working across different rooms and environments.
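If you’re wondering what a “joint localization error” in millimeters actually measures, here is a small worked example. I’m assuming the metric is the usual mean per-joint position error: the average straight-line distance between each predicted joint and where that joint really is. The three-joint skeleton and its coordinates are made up for illustration.

```python
import math

def mean_joint_error_mm(predicted, ground_truth):
    """Average Euclidean distance between matching joints (same units as input)."""
    if len(predicted) != len(ground_truth):
        raise ValueError("joint lists must be the same length")
    distances = [math.dist(p, g) for p, g in zip(predicted, ground_truth)]
    return sum(distances) / len(distances)

# Hypothetical 3-joint skeleton, (x, y, z) in millimeters.
truth = [(0.0, 0.0, 0.0), (0.0, 500.0, 0.0), (300.0, 500.0, 0.0)]
guess = [(30.0, 40.0, 0.0), (0.0, 500.0, 100.0), (300.0, 620.0, 90.0)]

# The three joints are off by 50 mm, 100 mm and 150 mm respectively.
print(mean_joint_error_mm(guess, truth))
```

So an error of roughly 92 mm means each joint lands, on average, within about a hand’s width of its true position.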

There's an open-source project on GitHub inspired by the CMU research -- ruvnet/ru-view with over 37,000 stars. It implements the signal processing pipeline and presence detection, though full pose estimation requires CSI-capable hardware like an ESP32 -- commodity hardware you can readily buy on Amazon.

Just to be super clear about what happened here. We went from “someone is in the room” to “here is a real-time 3D skeleton of every person in the room, reconstructed from radio waves, through walls, in the dark.” That’s a massive jump and most people don’t even know it happened.

Step Three: WiFi Knows Who You Are

This is where it gets really interesting. And really uncomfortable.

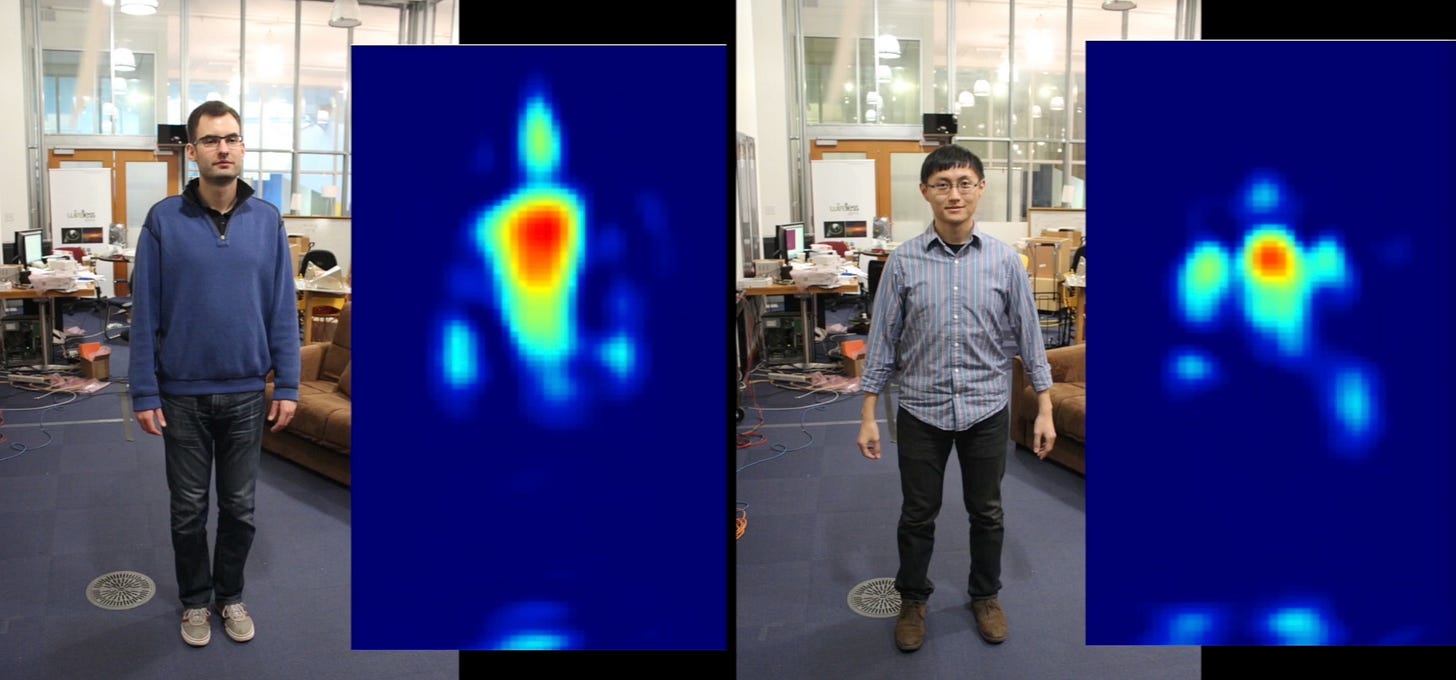

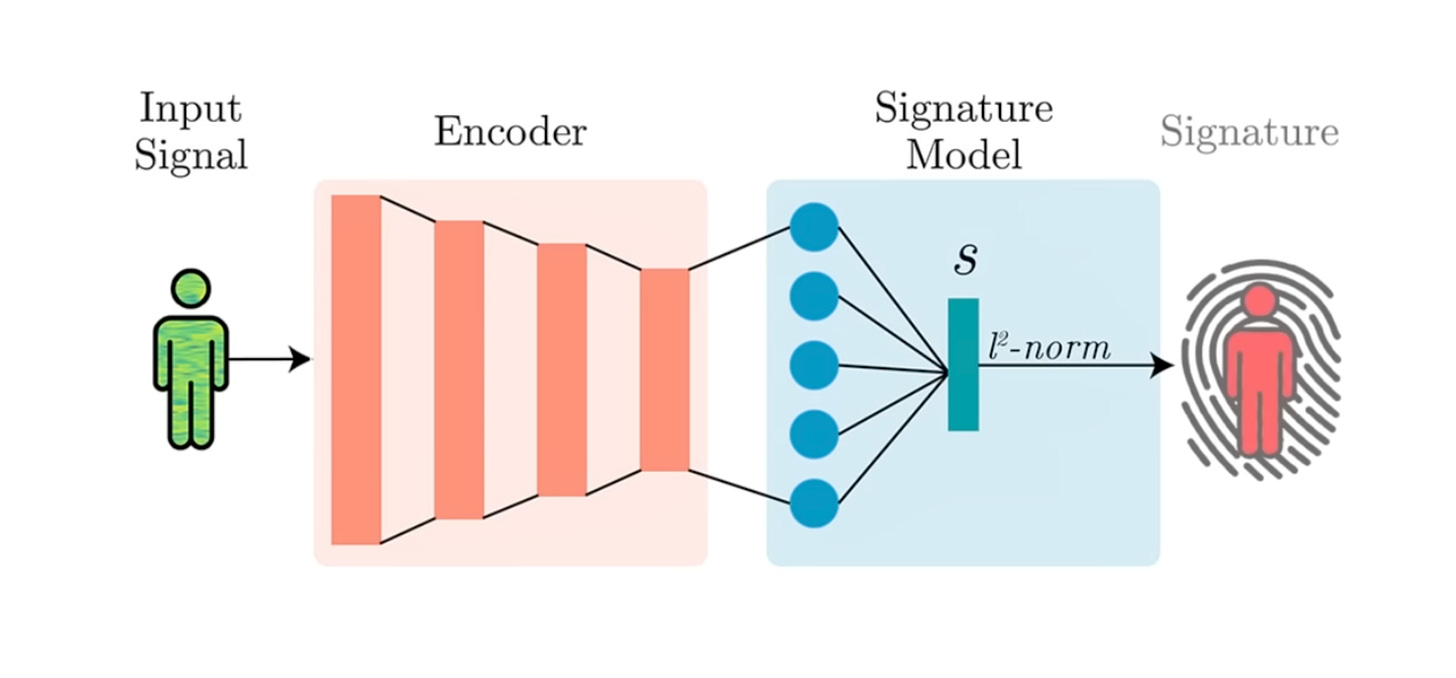

WiFi signals interact with your body in a way that’s unique to you. Your height, your body mass, your bone density, the way you walk, the way you shift your weight, even how you sit -- all of it creates a biometric signature in the WiFi signal.

In 2025 researchers at La Sapienza University of Rome built a system called WhoFi that uses a Transformer neural network to match these signatures to specific individuals. Person re-identification from WiFi. No visual information at all.
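The matching step in a re-identification system can be sketched in a few lines. This assumes some model has already turned a stretch of WiFi signal into a fixed-length signature vector, and it simply looks for the closest enrolled signature by cosine similarity. The names, the three-number vectors, and the 0.8 cutoff are hypothetical. This is not WhoFi’s actual architecture, just the general shape of “match a signature to a person.”

```python
import math

def cosine_similarity(a, b):
    """Similarity of two vectors by angle: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def identify(query, gallery, min_similarity=0.8):
    """Return the enrolled name whose signature best matches the query,
    or None if nothing is similar enough."""
    best_name, best_score = None, -1.0
    for name, signature in gallery.items():
        score = cosine_similarity(query, signature)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= min_similarity else None

# Hypothetical enrolled signatures (real ones would have far more dimensions).
gallery = {"alice": [0.9, 0.1, 0.3], "bob": [0.1, 0.8, 0.5]}

print(identify([0.85, 0.15, 0.25], gallery))  # close to alice's signature
print(identify([0.0, 0.0, 1.0], gallery))     # not close to anyone enrolled
```

The hard part, and the part the research is about, is learning signatures that stay stable for one person and differ between people. Once you have that, the lookup itself is trivial.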

But here’s the one that really spooked me. The Karlsruhe Institute of Technology published research in 2025 showing they could identify people with near 100% accuracy across 197 participants. The kicker? They didn’t even need CSI-capable hardware. Their approach uses standard beamforming feedback information -- stuff that’s available on any WiFi device. No special router firmware. No modified hardware. Your off-the-shelf WiFi router can already do this. The researchers explicitly warned about the implications for authoritarian states. The paper was published at ACM CCS, one of the top security conferences.

So let’s stack these up:

Step one: WiFi detects movement. Already shipping in millions of homes.

Step two: WiFi reconstructs your precise body pose through walls. Published research, open-source code on GitHub.

Step three: WiFi identifies the specific person just based on their unique signature. Also published.

We went from a coarse motion sensor to a surveillance system that works in the dark, through walls, on hardware you already own. And the gap between “detect motion” and “identify the person” is a software update. The hardware is already installed.

The Standard Nobody’s Talking About

Here’s something that didn’t make the video because it’s dense and technical, but it’s arguably the most important development in this space.

In September 2025, the IEEE ratified 802.11bf -- the WiFi Sensing standard. This officially makes sensing a native capability of WiFi. Not a hack. Not a research project. A standard.

It defines MAC and PHY modifications for sensing operations in the sub-7 GHz bands used by WiFi 6 and WiFi 7, and in the 60 GHz band. What this means in practice: every major chipset vendor -- Qualcomm, Broadcom, MediaTek, Intel -- is now implementing standardized sensing APIs in their next-generation WiFi chips.

By 2027 or 2028, WiFi sensing will likely be a standard feature of most new routers. Not an add-on. Not a firmware update. Built in from the factory.

That’s the inflection point. We’re going from “researchers demonstrated this is possible” to “every WiFi router on the planet can do this by default.” The infrastructure for ambient sensing at global scale is being standardized right now.

A Billion-Dollar Company Built on This Exact Thesis

A company called ZaiNar emerged in February after nine years in stealth. $1 billion valuation. More than $100 million in funding. The investor list includes Steve Jurvetson from the SpaceX board, the co-founders of Yahoo and Siri, and Skype’s founding engineer.

Nine years in stealth is a long time. What they built is a system that synchronizes existing WiFi and 5G signals to sub-nanosecond precision. Radio waves travel at about 30 cm per nanosecond, so if you can measure the timing of those signals precisely enough, you get sub-meter positioning that works indoors, outdoors, and through walls. No GPS required. No new sensors required.
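The arithmetic behind that claim is simple enough to check yourself. This sketch just multiplies a timing error by the speed of light to get the resulting distance error. The function name is mine, and it says nothing about how ZaiNar actually achieves its synchronization.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter

def range_error_m(timing_error_s):
    """Distance uncertainty caused by a given timing uncertainty."""
    return SPEED_OF_LIGHT_M_PER_S * timing_error_s

for label, dt in [("1 microsecond", 1e-6),
                  ("1 nanosecond", 1e-9),
                  ("0.1 nanosecond", 1e-10)]:
    print(f"{label:>15}: {range_error_m(dt):.3f} m")
```

A microsecond of clock error puts you off by about three football fields. A nanosecond gets you to about 30 cm, which is why sub-nanosecond synchronization is the whole game.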

They’re calling it “the foundational layer for physical AI.” And honestly, that tracks. GPS doesn’t work indoors. Cameras can drift. Nobody has cracked a universal positioning layer for the physical world. ZaiNar is saying that we already have one -- it’s called WiFi and 5G. We just weren’t listening to it correctly.

They’ve filed 100 patents. 90 issued. Zero rejections. Over $450 million in contracts and MOUs. They haven’t named their carrier partners publicly yet, and MOUs aren’t signed deals -- but that investor list and patent portfolio tell you this isn’t vaporware.

Now Scale It to the Battlefield

The same physics applies everywhere. Your WiFi router uses radio waves to sense your body. Defense contractors use radio waves to sense everything else.

Palmer Luckey -- the Oculus co-founder who sold it to Facebook -- started Anduril, which is raising $4 billion at a $60 billion valuation, with a16z and Thrive Capital leading. One of their systems, Pulsar, is an AI-powered electromagnetic warfare platform that passively senses and classifies every RF emission in an area. It identifies what’s producing the signal, geolocates the source, and can deliver an electronic attack. All autonomous. All running on edge AI.

They also deploy autonomous surveillance towers on the US-Mexico border through CBP. Radar, thermal, cameras, all fused together through their Lattice AI platform into a single real-time operational picture. Same principle as your WiFi router sensing your body -- except more modalities and a $60 billion defense contractor behind it.

Now Scale It to Space

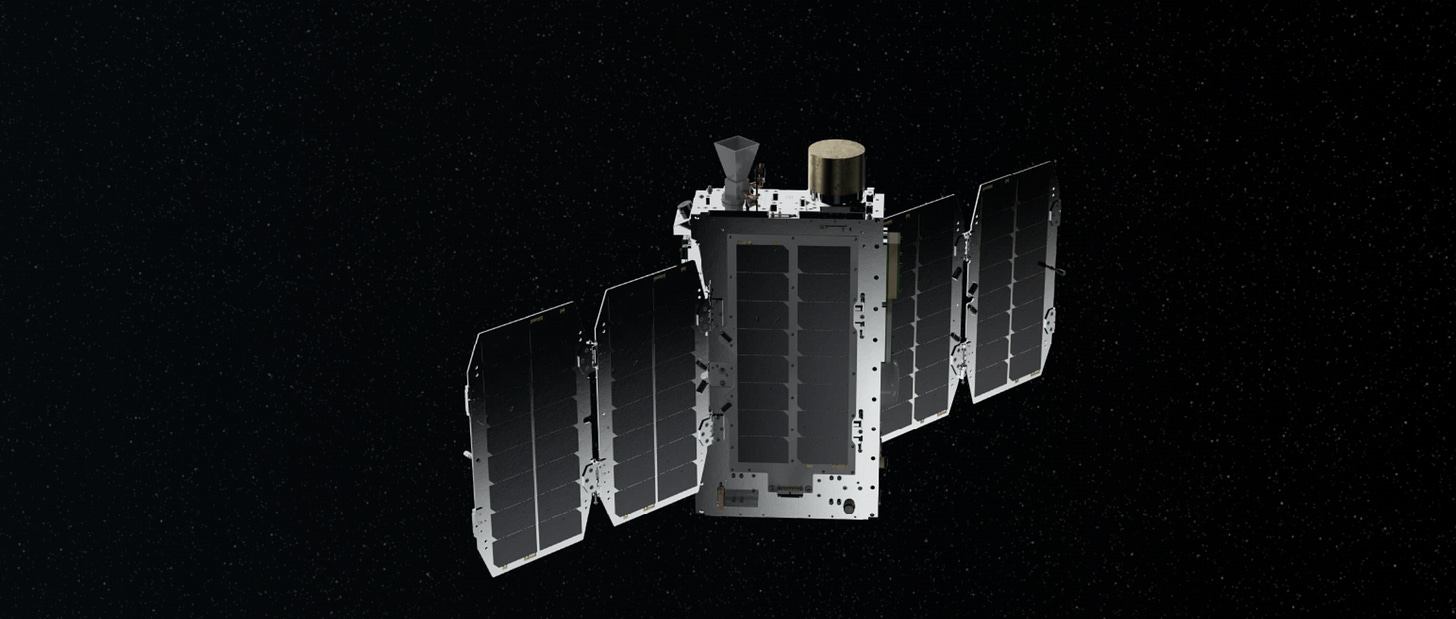

HawkEye 360 has 30+ satellites flying in clusters of three, about 500 km up. Their job: detect and geolocate every RF emitter on Earth. Ship radios, radar systems, GPS jammers, and emergency beacons.

They use it to track dark ships -- vessels that turn off their transponders to hide because they’re doing something illegal. But even if you go dark on AIS, you can’t go dark on physics. The satellites still see the radio signal.
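Why clusters of three? One standard way to locate a transmitter from several receivers is time difference of arrival (TDOA): the same signal reaches each receiver at a slightly different time, and those differences pin down where it came from. I’m assuming that technique here for illustration. The flat 2D map, the receiver positions, and the brute-force grid search are all simplifications of mine, and real systems have to handle orbital motion, Earth’s curvature, and measurement noise.

```python
import math

C_KM_PER_S = 299_792.458  # speed of light

def tdoas(emitter, receivers):
    """Arrival-time differences (seconds) at each receiver, relative to the first."""
    times = [math.dist(emitter, r) / C_KM_PER_S for r in receivers]
    return [t - times[0] for t in times[1:]]

def locate(measured, receivers, size_km=100, step_km=1):
    """Brute-force search for the grid point whose predicted TDOAs best match."""
    best_point, best_cost = None, math.inf
    for x in range(0, size_km + 1, step_km):
        for y in range(0, size_km + 1, step_km):
            predicted = tdoas((x, y), receivers)
            # Compare in km of range difference so the numbers stay well scaled.
            cost = sum(((p - m) * C_KM_PER_S) ** 2
                       for p, m in zip(predicted, measured))
            if cost < best_cost:
                best_point, best_cost = (x, y), cost
    return best_point

receivers = [(0, 0), (100, 0), (0, 100)]  # hypothetical receiver positions, km
true_emitter = (30, 40)                   # hypothetical "dark ship", km

measured = tdoas(true_emitter, receivers)  # noise-free, for illustration
estimate = locate(measured, receivers)
print("estimated emitter position:", estimate)
```

Notice the receivers never need to know when the signal was sent, only when it arrived at each of them. That’s why an emitter can’t hide just by staying quiet about its own position.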

Another company, Spire Global, has over 100 CubeSats collecting RF data from orbit for weather, maritime, and aviation tracking. In essence, there are satellites overhead right now whose entire purpose is to light up every single RF emitter on the planet. Every radio, every radar, every phone is a dot on a map.

That little minimap in your home -- your WiFi router sensing your body -- is effectively global. The same physics, just different altitudes.

The Bigger Picture

These are not separate stories. Your living room, ZaiNar, Anduril, HawkEye 360 -- they all share the same fundamental insight. Radio waves are a sensing medium. They always have been. We just didn’t have the compute and algorithms to make sense of them.

We treated radio waves as dumb pipes carrying data from point A to point B. But every WiFi signal, every 5G pulse, every radio broadcast paints a spatial picture of the physical world.

RF is the next spatial modality. It sits right alongside LiDAR, RGB cameras, and infrared -- except it’s already deployed in every building, on every battlefield, and overhead in orbit. It works through walls, in the dark, in terrible weather, on hardware nobody thinks twice about.

For the physical AI stack, this matters a lot. I spent six years at Google working on the Visual Positioning System -- the tech that uses cameras, LiDAR, and 3D maps to let a device know exactly where it is down to the centimeter. VPS already works indoors and outdoors. But RF sensing is another modality entirely. It could complement VPS as an additional sensor layer, or it could stand on its own. Either way, you’re adding all-weather, through-wall spatial awareness using hardware that’s already everywhere.

That’s what makes this so powerful for physical AI in the real world. Not just AR -- robotics, home automation, logistics, search and rescue, autonomous vehicles. Like, all of it.

The Question We Should Be Asking

The question isn’t whether radio waves become a primary sensing layer. That’s already happening at scale. Most people just don’t know their WiFi can see.

The real question is what we do with it.

It took us years to realize that GPS location data -- aggregated at scale -- was both incredibly useful and pretty scary. We’re about to go through that same uncomfortable period with RF sensing. But we have lessons from the past, and we should use them.

Personally, I think cameras outside your home make a lot of sense. Cameras inside your home are kind of creepy. So if you can use passive RF sensing to figure out who’s in a room and what they’re doing -- without a single camera pixel -- that’s amazing for a lot of applications. Home automation. Elder care. Energy management. Accessibility.

And on the defense side, if you’ve got people in the wild doing bad things and evading authorities, being able to sense them through walls without risking a person’s life is genuinely life-saving tech.

But I keep going back to that scene from The Dark Knight. Batman uses sonar from cell phones to catch the Joker. He builds the system. He uses it once. And then he destroys it. Because some tools are too powerful to leave running.

We’re building that tool right now. In every router, in every 5G tower, in every satellite overhead. The question is whether we’re going to be as responsible as a fictional billionaire in a bat suit.

I don’t have the answer. But I think we should be asking the question -- loudly -- while the 802.11bf standard is still fresh and the infrastructure is still being built. Because once it’s everywhere, we don’t get to un-deploy it.

If you’re a builder, researcher, or just someone who wants to map the frontier -- come hang out. I try to make sense of this stuff every week.

-- Bilawal
