Inside Palantir's Maven Smart System

How AI Picks 1,000+ Targets a Day -- The OODA Loop Compressed to Real Time

Maven Smart System is at the center of how the US military operates today. The Department of War’s chief AI officer walked through a live demo of it on stage -- the clearest public look at the AI software fusing satellites, drones, and signals intelligence into a single targeting picture.

In the first 12 hours of the Iran war, the US struck nearly 900 targets. Over 13,000 in 38 days. The reason that’s possible is sitting inside Maven. I want to walk through exactly how it works, and then show you how much of it you can actually build with commercial off-the-shelf tools today. Because the applications for situational awareness go way beyond the battlefield.

Watch the full video here:

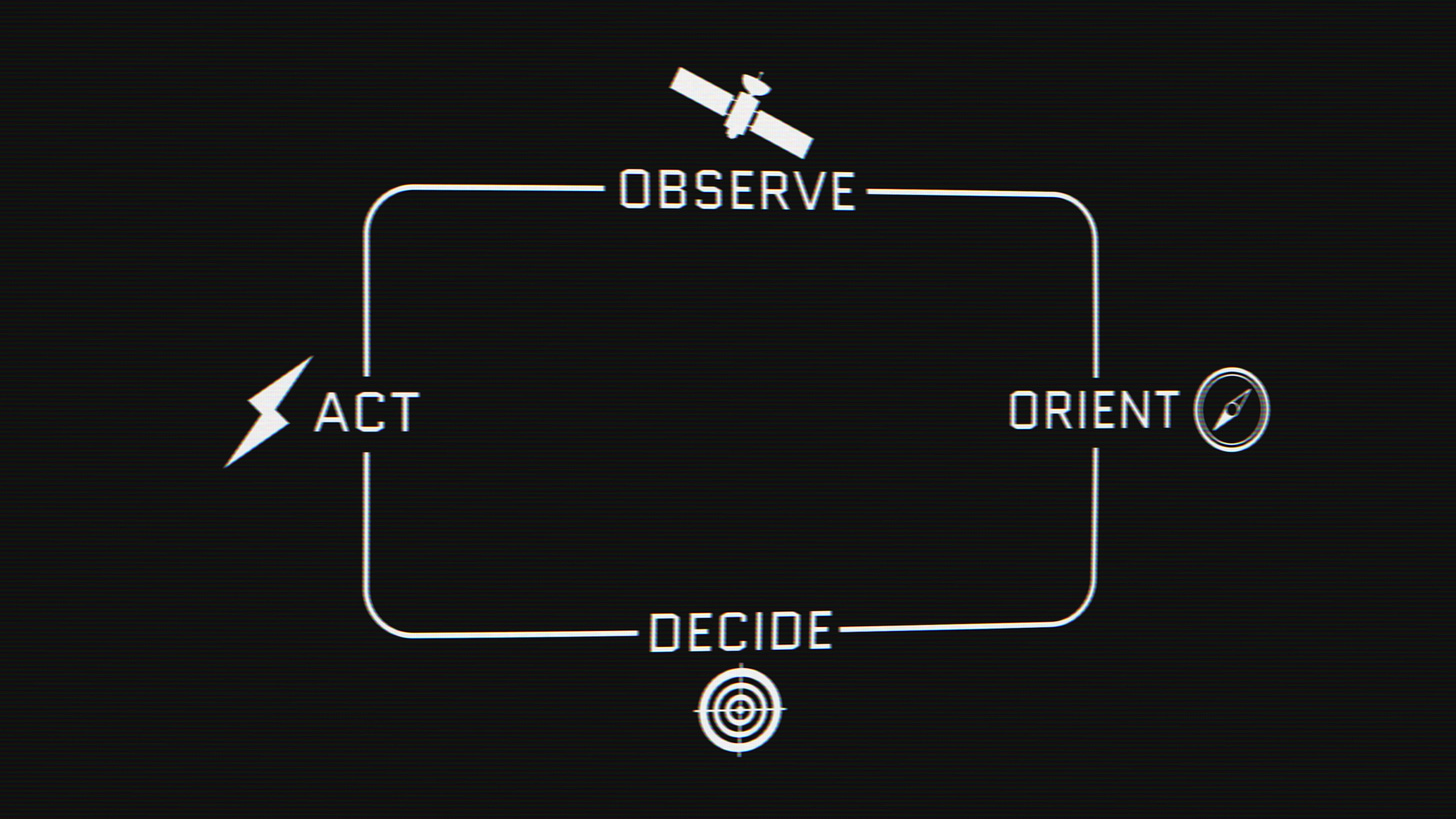

The OODA Loop

There’s a military concept called the OODA loop. Observe, Orient, Decide, Act. Honestly, it’s also what you do when you wake up and decide whether to make coffee or go grab a burrito. The key claim is this: if your decision cycle is faster than your adversary’s, they basically can never catch up. They can’t orient. They can’t plan. They’re permanently in react mode.

Maven exists to compress that loop down to one screen.

To understand why it had to exist, you have to understand what came before it. Adversarial targets were tracked in Excel spreadsheets. PowerPoint mapped network connections between people. Google Earth was for zooming in and out. As one US artillery officer put it to journalist Katrina Manson in her book Project Maven, “We’ve killed more people on Office than you’d ever imagine.”

The modern combatant commander, with every sensor system on Earth at their disposal, was going into battle with Microsoft Office. Maven was built to fix all of that. Basically Google Earth for war -- but with AI telling you what’s actually on the screen.

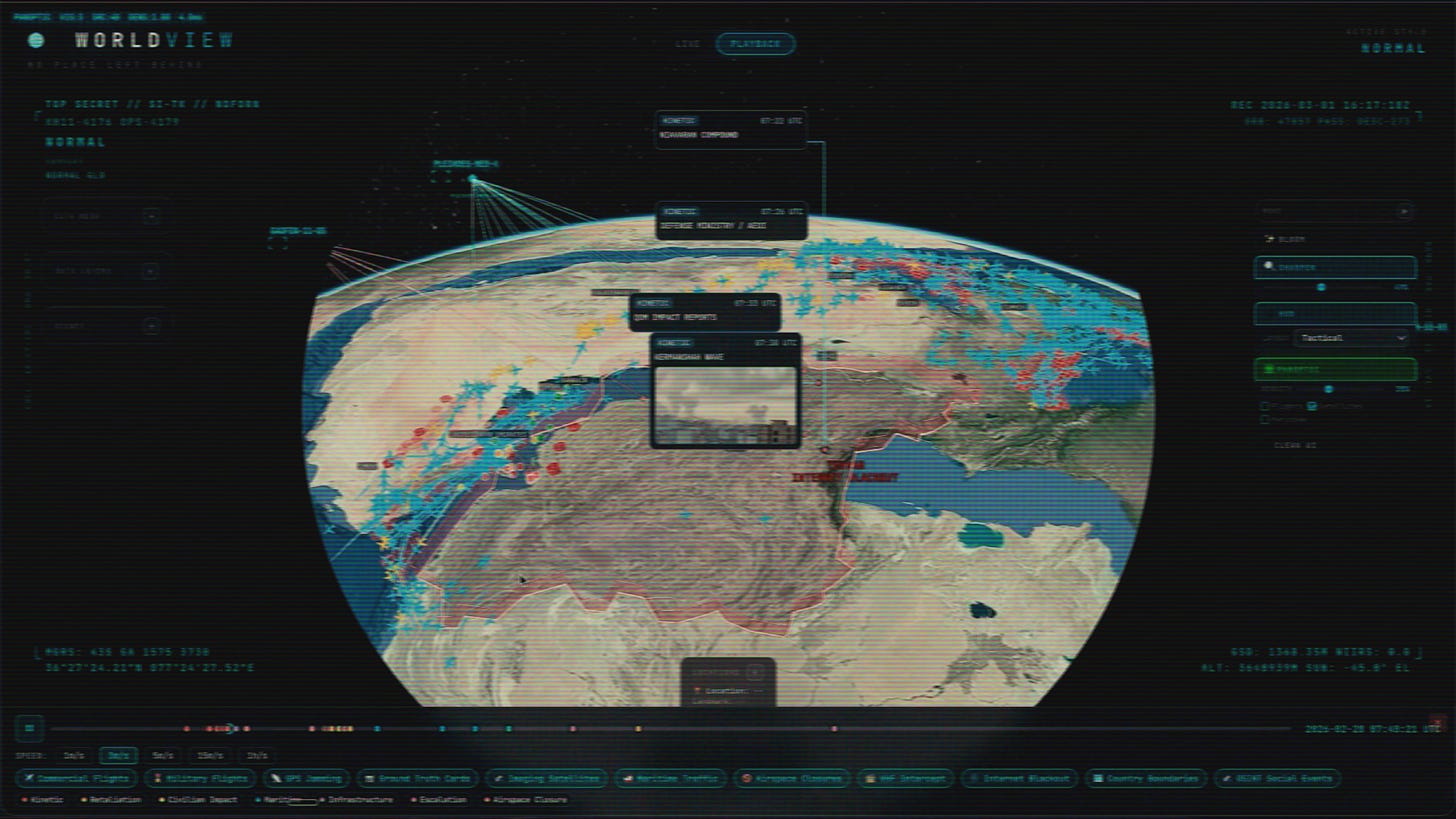

Left Click, Right Click, Left Click

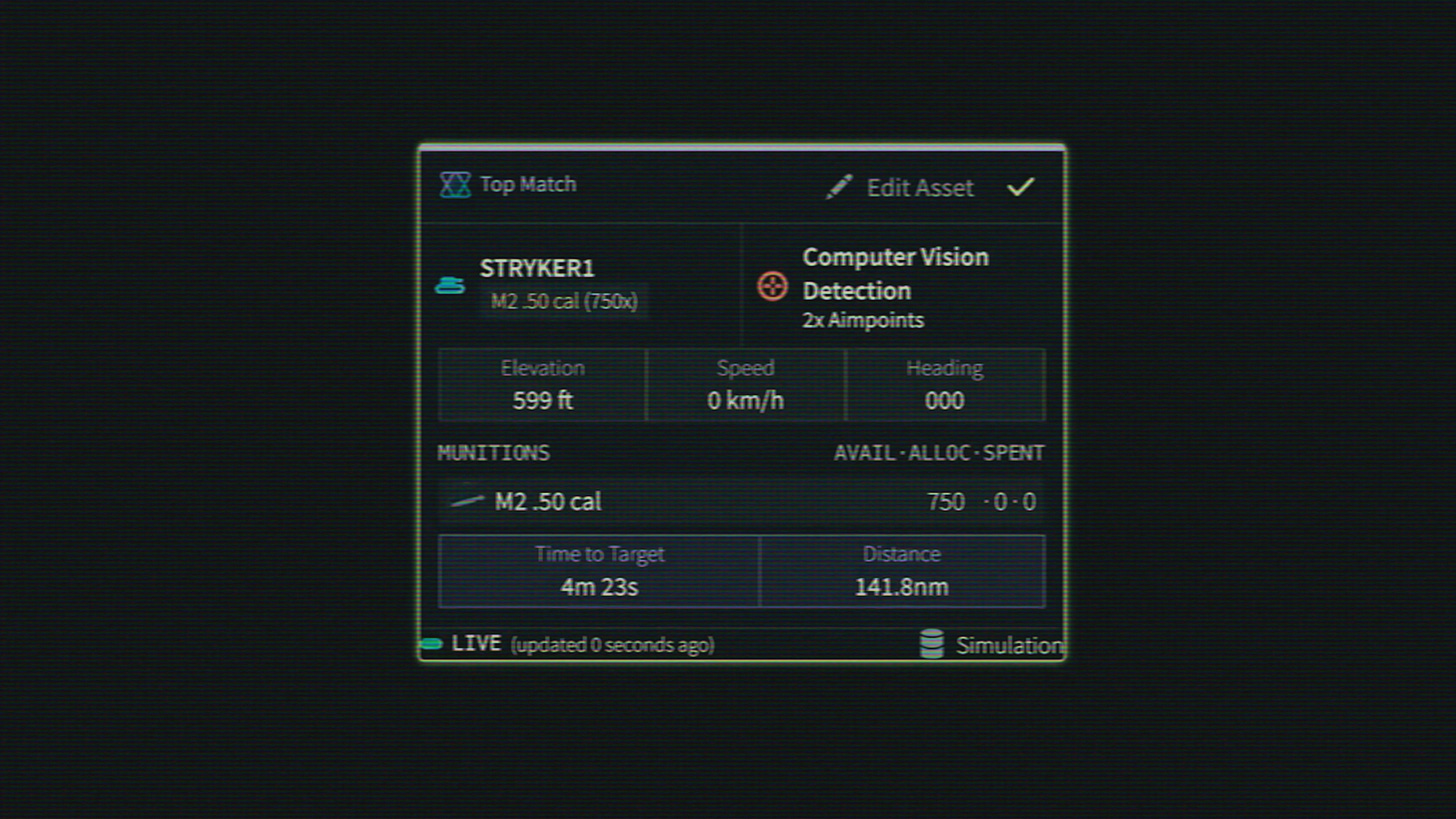

What Palantir built starts with a fused map view -- feeds from satellites, drones, signals intelligence, and prior map data, all layered together on a globe. Aerial assets in the area are visible at a glance. Different target designations sit overlaid on the same surface. Road network data can be toggled in to plan exactly how to reach any point of interest.

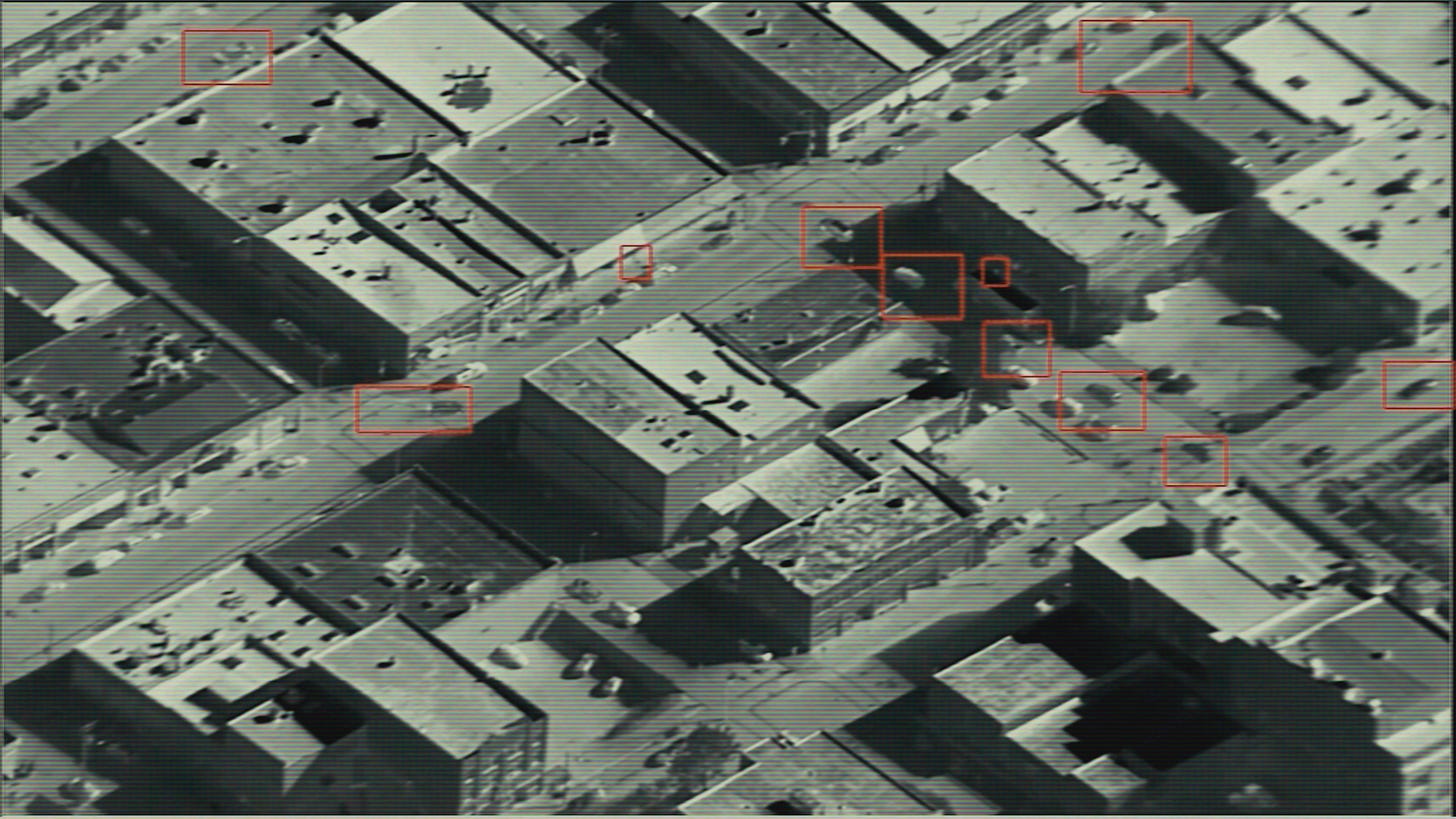

At ground-level resolution, the map populates with dots -- detections from computer vision. Each one carries a stable identifier number that follows it across modalities. The same vehicle in a satellite view, a drone view, and a CCTV feed all keep the same ID. That’s how the system knows it’s the same target across feeds.
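To make the stable-ID idea concrete, here is a minimal sketch, assuming every detection arrives already georeferenced to a latitude and longitude. Public reporting doesn't describe how Maven actually associates detections across feeds; the nearest-neighbor gating below is a stand-in, and `Detection`, `TrackRegistry`, and the 15-meter gate are hypothetical names and values chosen for illustration.

```python
import math
from dataclasses import dataclass
from itertools import count


@dataclass
class Detection:
    feed: str   # e.g. "satellite", "drone", "cctv"
    lat: float
    lon: float


def distance_m(lat1, lon1, lat2, lon2):
    """Approximate ground distance in meters (equirectangular, fine at short range)."""
    earth_radius_m = 6_371_000.0
    x = math.radians(lon2 - lon1) * math.cos(math.radians((lat1 + lat2) / 2))
    y = math.radians(lat2 - lat1)
    return earth_radius_m * math.hypot(x, y)


class TrackRegistry:
    """Hands out IDs and reuses one when a new detection lands near a known track."""

    def __init__(self, gate_m=15.0):
        self.gate_m = gate_m      # hypothetical association radius
        self.tracks = {}          # track_id -> (lat, lon) of last known position
        self._ids = count(1)

    def assign(self, det):
        best_id, best_d = None, self.gate_m
        for track_id, (lat, lon) in self.tracks.items():
            d = distance_m(det.lat, det.lon, lat, lon)
            if d <= best_d:
                best_id, best_d = track_id, d
        if best_id is None:       # nothing close enough: start a new track
            best_id = next(self._ids)
        self.tracks[best_id] = (det.lat, det.lon)
        return best_id
```

Feeding it a satellite detection and then a drone detection a few meters away returns the same ID for both, while a detection a kilometer off gets a fresh one. A real system would also have to weigh appearance, heading, and timing, which this sketch ignores.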

Detections get nominated to a literal Kanban board -- like a task tracker, but with vertical columns representing the different processes that different teams used to run separately. From the board, the system tasks the right asset to execute the target, optimizing across criteria: time to target, fuel, munitions, and distance. It spits out a recommended plan, a human approves it, and then it executes.
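The board itself is easy to picture as a small state machine. This is a sketch under stated assumptions, not a description of Palantir's software: the column names, the `Card` class, and the `advance` function are all hypothetical. The one thing it takes from the demo is the rule that a human has to approve before anything moves to execution.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical column names; the real board's columns are not public.
COLUMNS = ["nominated", "plan_recommended", "approved", "executed"]


@dataclass
class Card:
    detection_id: int
    column: str = "nominated"
    approved_by: Optional[str] = None
    history: list = field(default_factory=list)


def advance(card, approver=None):
    """Move a card one column to the right.

    Entering the "approved" column requires a named human approver.
    """
    idx = COLUMNS.index(card.column)
    if idx == len(COLUMNS) - 1:
        raise ValueError("card is already in the final column")
    next_column = COLUMNS[idx + 1]
    if next_column == "approved":
        if not approver:
            raise PermissionError("a human approver is required")
        card.approved_by = approver
    card.history.append((card.column, next_column))
    card.column = next_column
    return card
```

Calling `advance(card)` on a card sitting in `plan_recommended` raises `PermissionError` unless an `approver` is passed, so the approval step can't be skipped by accident.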

Or as the chief AI officer put it: “Left click, right click, left click. Magically, it becomes a detection.”

This is the part the Department of War loves. Maven takes something that used to require a room full of analysts generating courses of action and collapses it into a single screen, pushing everyone onto the same surface. From detection to strike decision, one system.

Map that to OODA. Sensor feeds coming in are Observe. Fusing and classifying is Orient. Kanban + course of action is Decide. Approving and executing is Act.

The Anthropic Wrinkle

Here’s the part that’s complicated and worth sitting with.

Based on public reporting, the AI doing the natural-language intelligence queries inside Maven is Claude, built by Anthropic. Anthropic was one of the first to deploy a large language model in a classified military setting. The Washington Post reported that Claude is “central to the US campaign in Iran.”

But Anthropic drew a line. Their position: you can use Claude as long as it’s not deployed for fully autonomous weapon systems or mass domestic surveillance. The Trump administration responded by declaring Anthropic a “supply chain risk.” The argument from the administration: you’re a contractor, you provide a tool, you don’t get a say in how it’s used.

When you combine that with reporting that suggests Maven is running Claude 3.5 Sonnet -- not even the latest model -- it raises a real question about whether these systems are actually good enough to deploy at this scale. The AI company that brought the first LLM to a classified setting is now in an open fight with the customer over how the tool is allowed to be used. Recent reporting suggests a deal can still be struck. But the tension is real, and it’s inside the system right now.

What Maven Sees

Maven is only as good as the data it can see. Let me walk through the inputs.

Spy satellites in orbit -- Keyhole-class systems plus the next generation SpaceX is building. Synthetic aperture radar from commercial constellations like ICEYE and Capella Space -- radar pulses that pass through cloud and darkness and return 25-centimeter-resolution imagery of the ground. The same dual-use tech that helps determine whether a bridge is about to break is now feeding kill chains.

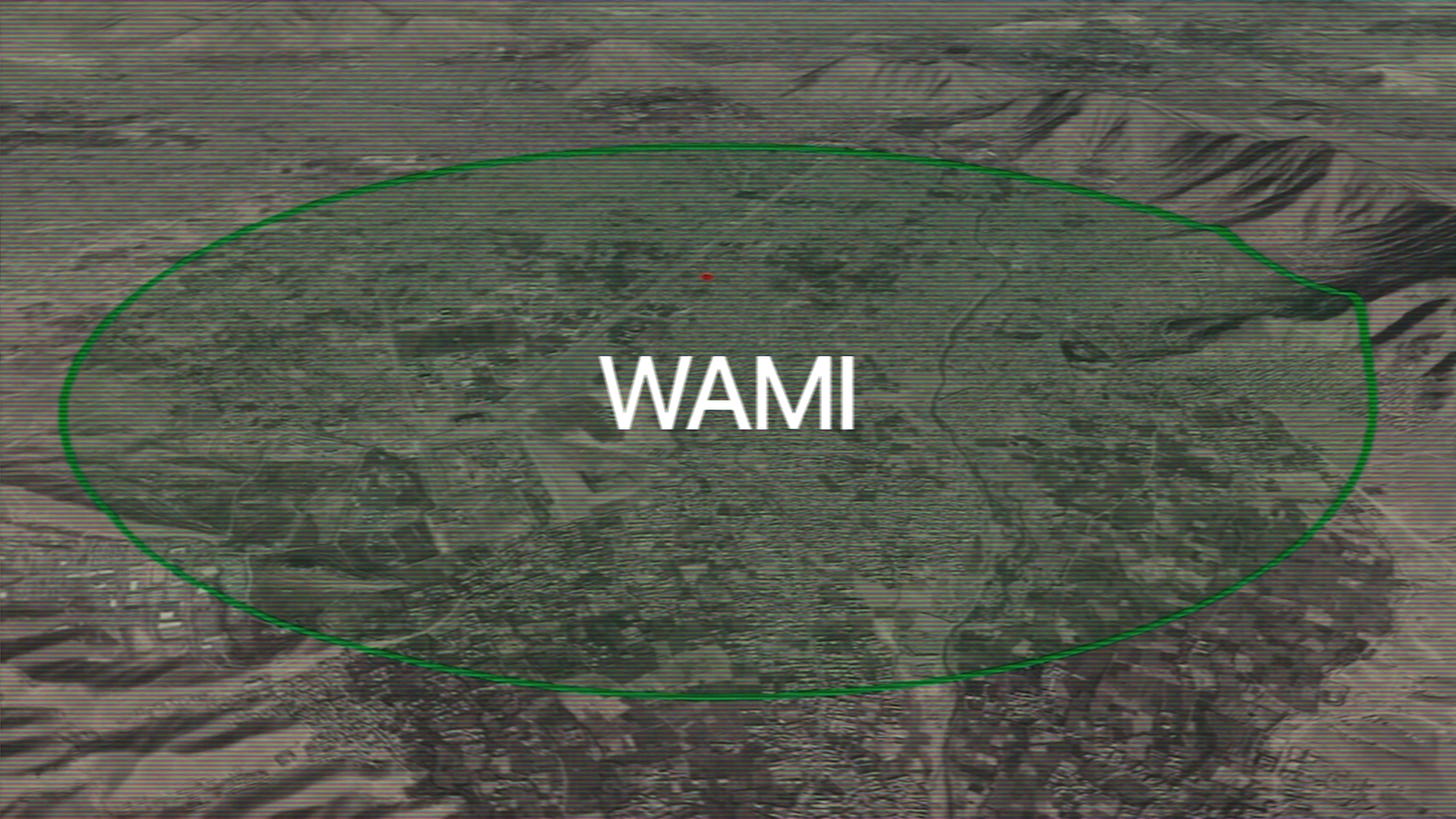

Then there’s the stuff most people don’t know about. Wide Area Motion Imagery -- WAMI. The original system, Gorgon Stare, mounts on MQ-9 Reaper drones and stitches 368 cameras into a 1.8 gigapixel composite that surveils an entire small city from 25,000 feet. Think Google Earth Live. The program was reportedly inspired by Enemy of the State, which is freaking wild. Once you have a persistent image of everything, you can rewind to figure out where any car or person originated from. The next-gen version, ARGUS-IS, covers 36 square miles with enough resolution to track individual pedestrians.

Drone full motion video -- Reapers streaming live feeds for real-time object detection. And in GPS-denied environments -- which Iran absolutely is, with constant jamming and spoofing -- drones still navigate. Vantor’s Raptor system matches the onboard camera feed against their Precision3D terrain model, built from 30-centimeter satellite imagery. The drone doesn’t need GPS at all. It knows where it is from the terrain itself. Same visual-positioning principle we’ve covered on the channel before, applied to a Reaper.
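The visual-positioning principle fits in a few lines. This is a toy sketch, not Raptor's algorithm, which isn't public: it slides a small camera patch over a reference grid and picks the offset with the lowest sum of squared differences. The function name `locate_patch` and the tiny grids are made up for illustration, and a real system would have to handle scale, rotation, perspective, and lighting.

```python
def locate_patch(reference, patch):
    """Find where `patch` best matches inside `reference`.

    Both are 2D lists of numbers (e.g. brightness or elevation samples).
    Returns ((row, col), score) for the top-left corner of the best match,
    scored by sum of squared differences (lower is better).
    """
    ref_h, ref_w = len(reference), len(reference[0])
    p_h, p_w = len(patch), len(patch[0])
    best_pos, best_score = None, float("inf")
    for r in range(ref_h - p_h + 1):
        for c in range(ref_w - p_w + 1):
            score = sum(
                (reference[r + i][c + j] - patch[i][j]) ** 2
                for i in range(p_h)
                for j in range(p_w)
            )
            if score < best_score:
                best_pos, best_score = (r, c), score
    return best_pos, best_score


# Toy reference map and a slightly noisy view of the cell block at row 2, col 1.
reference_map = [
    [10, 12, 15, 20, 22],
    [11, 14, 18, 25, 27],
    [13, 19, 30, 41, 38],
    [12, 21, 35, 50, 44],
    [10, 17, 28, 39, 33],
]
camera_view = [
    [20, 29],
    [21, 36],
]
position, score = locate_patch(reference_map, camera_view)
```

Even with noise in the camera view, the best match lands on the right cell, which is the whole trick: if the map is georeferenced, the match position is the drone's position.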

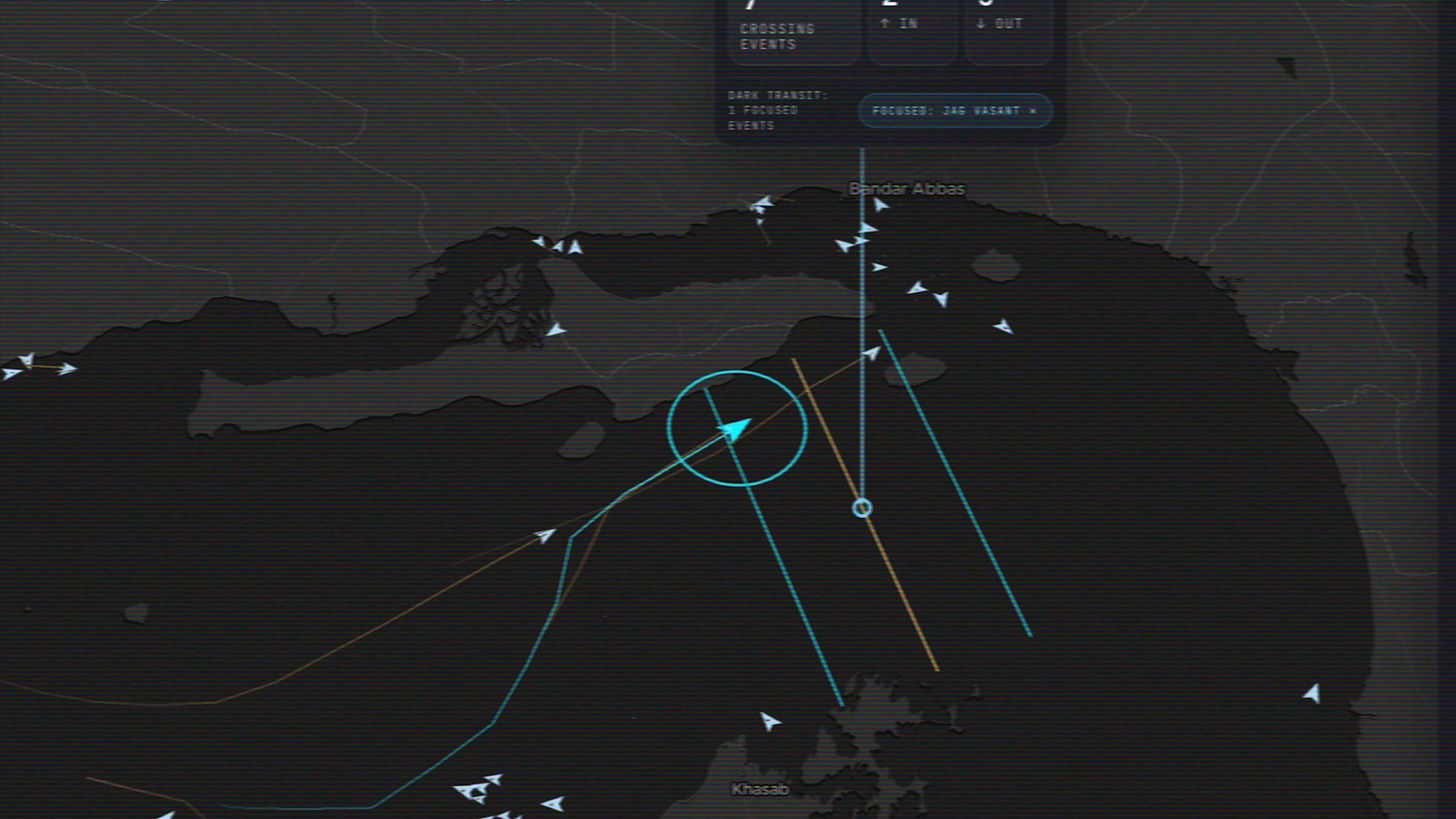

Signals intelligence -- intercepted comms and electronic emissions integrated alongside the imagery. RF satellite constellations like Spire for dark-vessel detection. Entire shadow fleets repaint hulls and turn off their AIS transponders -- but they don’t escape, because the people on board have devices with Candy Crush installed. Advertising intelligence -- the ad-network data from those phones -- is enough to geolocate the ship. You can obfuscate every other signal. You cannot obfuscate the cluster of devices belonging to people who like a free game.

And then the secret one -- the RQ-180, America’s most classified stealth reconnaissance drone. Publicly exposed for the first time during this war when one emergency-landed at an air base in Greece in March. A flying wing believed to operate at 60,000+ feet for 24-hour autonomous missions, likely tracking Iran’s mobile missile launchers -- the kind of targets that move, hide, and require persistent overhead coverage to catch.

All of it gets fused. That’s the Observe step. Maven sees everything. The question is what to do with it.

Orient, Decide, Act -- At Scale

This is left click, right click, left click at wartime scale.

Operation Epic Fury kicked off on February 28. In the first 12 hours, the US struck nearly 900 targets across Iran. Maven was generating prioritized target lists faster than humans could review them. Orient and Decide effectively disappear when the system pre-computes both.

The most dramatic single operation was Kharg Island on March 13. Over 90 military targets simultaneously -- naval mine storage, missile bunkers, air defense systems -- in what Trump called “one of the most powerful bombing raids in the history of the Middle East.” The oil infrastructure was deliberately left intact. To coordinate 90+ simultaneous strikes, Maven generates pre-packaged target sets with weapons assigned and legal sign-offs already in the system. The whole thing executes in parallel.

But there’s a cost to speed. When you’re processing thousands of targets in 24 hours, what gets missed? And when something gets missed, who’s at fault? The model maker? The sensor data? The map underneath that incorrectly classified a school as a military facility? These are not academic questions anymore.

The Department of War’s stance right now is clear: no fully autonomous weapon systems, every decision has a human in the loop. But think about self-driving cars. At some point, the system gets accurate enough that it surpasses the average human driver. People still die. Now compress that timeline and apply it to a kill chain processing thousands of decisions a day.

This isn’t just Palantir, by the way. Anduril has Lattice -- same concept, different platform. The shared idea is the common operational picture: a single fused view of the battlespace where AI handles Orient and Decide.

The Same Tech, Off the Battlefield

Here’s the part most people miss. The same Palantir technology running Maven also runs the UK’s NHS data infrastructure, logistics for the World Food Programme, Airbus’s supply chain, oil-and-gas optimization, and money laundering detection at major banks. Same OODA loop. Same fusion layer. Different problems.

That’s the bigger story. The civilian version of this exists in pieces, and most of those pieces are now off-the-shelf.

Observe -- the sensing layer. You can buy commercial satellite imagery. SAR is commercial. Plane and ship tracking data is accessible. Japan has city-scale CCTV networks you can query. You can fly your own drones. You can buy robots. Social media ad-tech data is purchasable.

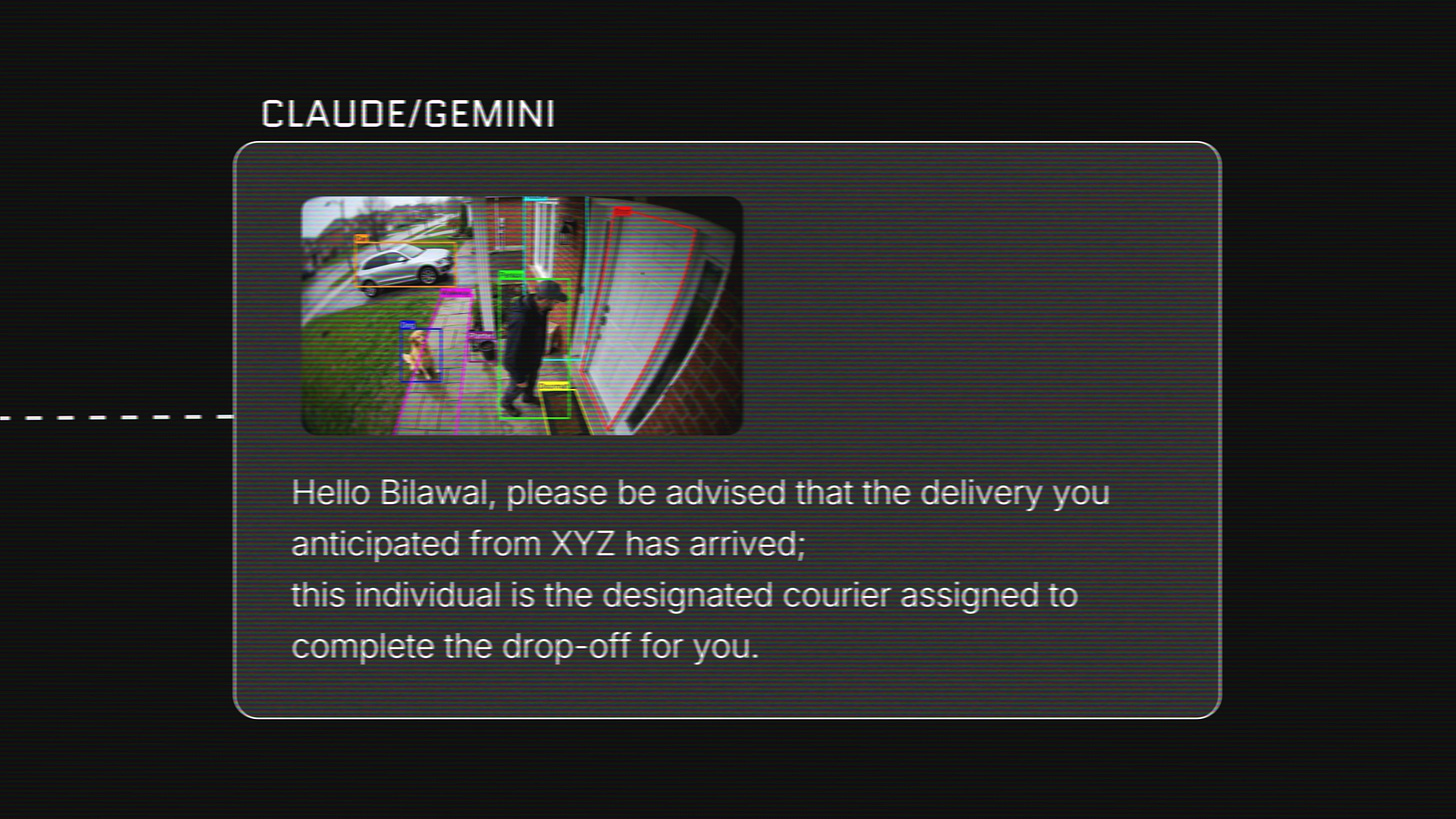

Orient -- classification and fusion. This is exactly what computer vision and large language models do. Point Meta’s Segment Anything at a security camera feed, then hand the structured output to Claude or Gemini to tell you what those things are doing in context. Check whether the person at your door is the Amazon driver you were expecting. The AI layer that Maven uses to make sense of everything is available to anyone with an API key.
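Here is roughly what the hand-off between the vision step and the language model can look like. It's a sketch with assumptions called out: the detection dictionaries are a hypothetical format (not the actual output of Segment Anything or any specific library), `build_context_prompt` is a made-up helper, and the code stops at building the prompt text rather than calling any particular model API.

```python
import json


def build_context_prompt(detections, expectation):
    """Turn structured vision output into a text prompt for a language model.

    `detections` is a list of dicts in a hypothetical format, e.g.
    {"label": "person", "carrying": "parcel", "confidence": 0.91}.
    """
    lines = [
        "You are reviewing detections from a home security camera.",
        f"Expected event: {expectation}",
        "Detections (JSON, one per line):",
    ]
    lines += [json.dumps(d, sort_keys=True) for d in detections]
    lines.append(
        "Does what the camera sees match the expected event? "
        "Answer yes, no, or unsure, with one sentence of reasoning."
    )
    return "\n".join(lines)
```

The returned string is what you would send to Claude, Gemini, or whichever model you use, through that provider's own client. Keeping the detections as JSON means the model sees exactly what the vision step produced, and nothing it didn't.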

Decide -- course of action generation. For the military it’s “recommend a weapon and an asset.” For everyone else it’s “what should I actually pay attention to right now? What changed? What’s anomalous?” Surface that automatically -- not a barrage of generic alerts that say “unknown person detected at 3:42 AM” and tell you nothing.
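One simple way to separate "what's anomalous" from generic alerts is to compare each new event against what the camera has seen before. This is a minimal sketch, assuming events are dicts with a `label` and an `hour` field (a hypothetical format); `surface_anomalies` and the `min_seen` threshold are illustrative, not from any product.

```python
from collections import Counter


def surface_anomalies(history, new_events, min_seen=3):
    """Return only the new events whose (label, hour) pattern is rare in history.

    Each event is a dict with "label" (what was seen) and "hour" (0-23).
    An event is surfaced when that pattern occurred fewer than `min_seen`
    times before, and the alert says why.
    """
    seen = Counter((e["label"], e["hour"]) for e in history)
    alerts = []
    for event in new_events:
        n = seen[(event["label"], event["hour"])]
        if n < min_seen:
            reason = (
                f"{event['label']} at hour {event['hour']} "
                f"seen only {n} time(s) before"
            )
            alerts.append({**event, "reason": reason})
    return alerts
```

A delivery van at 2 PM that shows up every weekday stays quiet. A person at 3 AM who has never appeared before gets surfaced, with the reason attached. Frequency counting is the crudest possible baseline, but it already beats an alert that fires on every detection.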

Act -- for the military it’s kinetics. For us, it’s decisions made in near real time on what’s happening in the world and what’s happening in our own lives.

I’ve been building toward both. The first surface is God’s Eye View -- fusing open-source intelligence into a common operational picture. What you’ve already seen across the Epic Fury and Strait of Hormuz coverage. That’s getting a lot more capability now that there’s a team behind it.

The second surface is Argus -- situational awareness for your own life. Your data, your cameras, your feeds, your context, fused into the same kind of unified view. Not surveillance. Awareness of what’s happening in your sanctum sanctorum. Private by default, optionally shareable. The map of the world plus your own island that you control.

You can buy all the sensors. The AI is commercial. The only piece that doesn’t exist for civilians yet is the fusion layer -- the thing that pulls it all into one picture. That’s what we’re building.
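At its very simplest, a fusion layer is a merge: several feeds, each with its own timestamps, pulled into one timeline. The sketch below shows only that first step, assuming each feed is already sorted by time and each event is a dict with a timestamp under `"t"`. The `fuse_feeds` name and the event format are hypothetical, and this is not a description of what we're building.

```python
import heapq


def fuse_feeds(feeds):
    """Merge time-sorted event lists from several sources into one timeline.

    `feeds` maps a source name to a list of events. Each event is a dict
    with a timestamp under "t", and each list must already be sorted by "t".
    Every event in the result is tagged with the source it came from.
    """
    tagged = (
        [{**event, "source": name} for event in events]
        for name, events in feeds.items()
    )
    return list(heapq.merge(*tagged, key=lambda e: e["t"]))
```

The hard parts come after this: agreeing on coordinates, resolving which events describe the same thing, and deciding what deserves attention. But one shared timeline is where a common picture starts.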

Where This Goes

Observe. Orient. Decide. Act. That’s how Maven works. That’s how every common-operational-picture platform works. And honestly, it’s how good decision-making works in general -- you’re just doing it slower without the tools.

So that’s where I’m going next. God’s Eye View as the window to the world we all deserve. Argus as the same framework turned inward. Same OODA. No kinetics. Just clarity.

If you can’t wait -- point your AI of choice at this post and the YouTube transcripts, and you can build a decent first-pass viewer yourself today. That’s also part of the point.

One quick thing -- Adobe 99U in NYC, May 11

I’m going to be on the main theater stage at Adobe 99U on May 11 in NYC, talking about how creative work is changing in the era of world models and generative cinema.

Adobe gave me 5 free tickets to give away to this community. First-come, first-served, redeem at: event.adobe.com/adobe99u/BilawalSidhuGiveaway. Deadline is May 8.

If you don’t grab one of the 5, there’s still a 20% off code for the public ticket: adobe.ly/99U, code Bilawal20.

If you’re going to be in New York that week, come say hi.

If this gave you something to think about, share it with someone who should see it. The next phase of all of this is happening fast.

-Bilawal

Many thanks for yet another thought-provoking master post, Bilawal, I'm upgrading to paid today! When you say 'The only piece that doesn’t exist for civilians yet is the fusion layer -- the thing that pulls it all into one picture. That’s what we’re building', do you mean that you (and a team?) are building a commercial product for the tech-challenged?